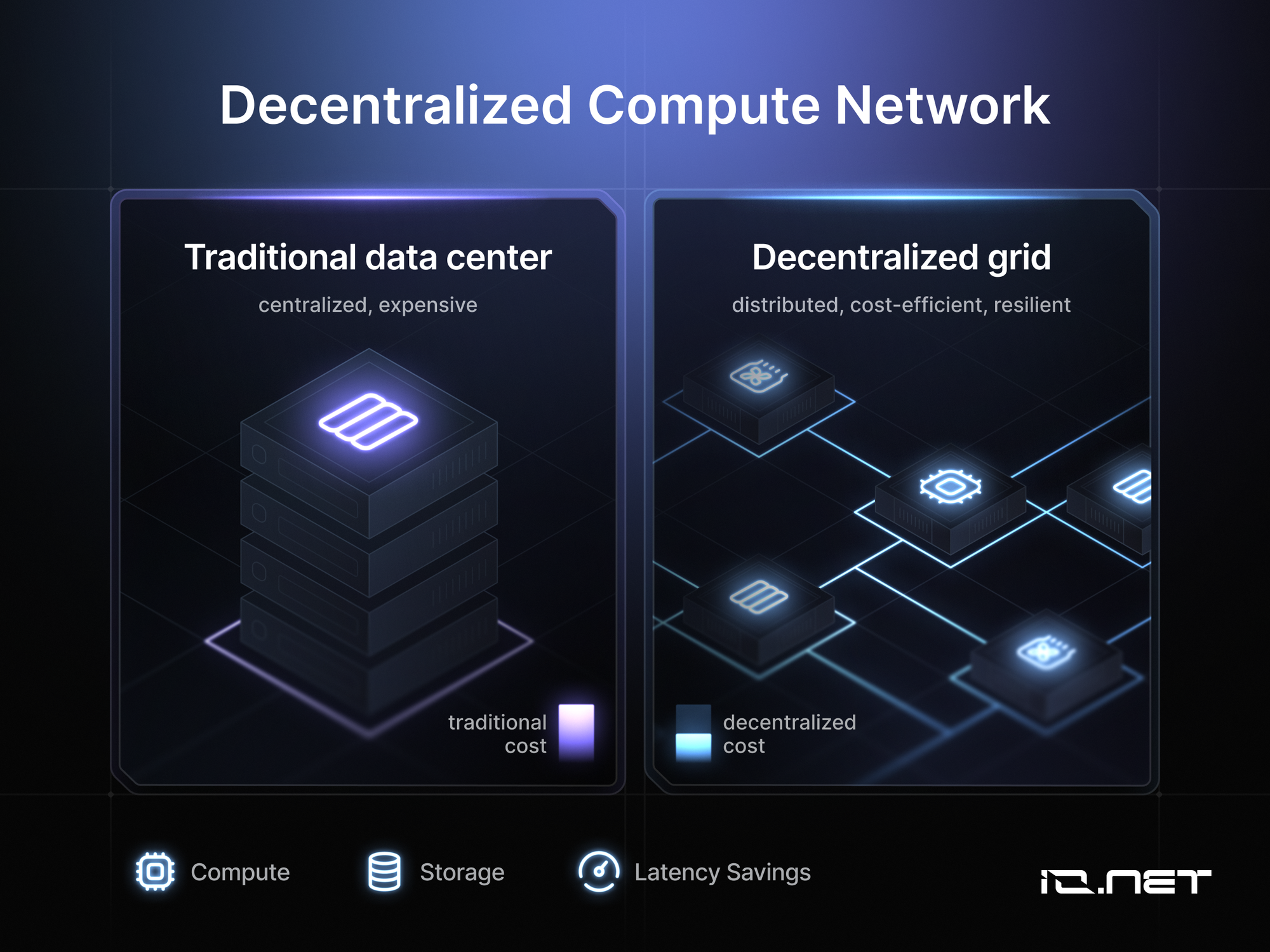

As AI models grow more sophisticated, demand for GPU compute has soared, leaving developers grappling with rising costs and supply shortages at centralized hyperscalers. Tokenizing GPU resources in decentralized inference markets offers a compelling alternative, turning idle hardware worldwide into a liquid, tradable asset class. This shift not only democratizes access to high-performance computing but also aligns incentives through AI compute tokens, fostering sustainable networks for inference workloads.

The Rise of Tokenized GPU Inference Networks

Platforms like io.net and Aethir are at the forefront of this transformation. io.net, an AI-native network, aggregates decentralized GPU clusters and delivers compute at a fraction of traditional costs. By October 2025, it reported over $20 million in annualized revenue, bolstered by integrations with Gaia and Injective. Averaging 6,720 verified GPUs in early 2025, io.net shows how tokenized GPU inference can scale. Aethir complements this with enterprise-grade services, reporting $39.8 million in Q3 2025 revenue and up to 86% cost savings compared with centralized clouds. Its $191 million market cap and more than 100 partners underscore growing adoption.

Render Network has expanded from rendering into AI inference, dedicating 40% of its capacity to inference by late 2025. With RNDR trading at $3.80 and 24-hour volumes of $53–62 million, the market signals investor conviction in decentralized AI GPU markets. Newer entrants such as NodeGoAI and Kaisar Network further expand the options, enabling peer-to-peer monetization of underutilized GPUs for training and inference.

Key Platforms Driving Blockchain Inference Trading

Key Decentralized GPU Platforms

- io.net: AI-native network providing decentralized GPU clusters at a fraction of hyperscaler costs, averaging 6,720 verified GPUs in Q1 2025 and over $20M annualized revenue by Oct 2025.

- Aethir (ATH): Enterprise-grade GPU cloud offering up to 86% cost savings, with $191M market cap and Q3 2025 revenue of $39.8M.

- Render Network (RNDR $3.80): Expanded from rendering to AI inference, allocating 40% of compute capacity by late 2025.

- NodeGoAI: P2P platform enabling monetization of unused computing power for AI applications, founded in 2021.

- Kaisar Network: Decentralized platform for AI-optimized renting of distributed GPU resources, established in 2024.

These networks tokenize GPU power: suppliers earn tokens for their contributions while developers bid for capacity in real-time auctions. Settling these trades on-chain is meant to make blockchain inference trading transparent and verifiable, which is critical for production-grade AI. For instance, GAIB's partnership with Aethir launched what was billed as Web3's first tokenized GPU product, creating liquid markets for financing high-performance hardware. AI Pulse's GDePIN targets Western markets, leasing resources without centralized intermediaries.
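To make the bidding mechanism more concrete, here is a minimal Python sketch of a greedy double auction that matches developer bids against provider asks. It is not the matching engine of io.net, Aethir, or any other network named here; the `Ask` and `Bid` types, the `match_orders` function, pay-as-ask pricing, and the tokens-per-GPU-hour unit are all hypothetical assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Ask:
    """A provider's offer (hypothetical): GPUs available at a minimum price."""
    provider: str
    price: float  # tokens per GPU-hour (illustrative unit)
    gpus: int


@dataclass
class Bid:
    """A developer's request (hypothetical): GPUs wanted up to a maximum price."""
    developer: str
    max_price: float  # tokens per GPU-hour (illustrative unit)
    gpus: int


def match_orders(bids: list[Bid], asks: list[Ask]) -> list[tuple[str, str, int, float]]:
    """Greedy double auction: the highest bids are filled from the cheapest asks.

    Returns (developer, provider, gpus, price) fills, each priced at the ask.
    """
    bids = sorted(bids, key=lambda b: b.max_price, reverse=True)
    asks = sorted(asks, key=lambda a: a.price)
    bid_left = [b.gpus for b in bids]  # unfilled GPUs per bid
    ask_left = [a.gpus for a in asks]  # unsold GPUs per ask
    fills = []
    i = j = 0
    while i < len(bids) and j < len(asks):
        if bids[i].max_price < asks[j].price:
            break  # best remaining bid cannot afford the cheapest remaining ask
        qty = min(bid_left[i], ask_left[j])
        fills.append((bids[i].developer, asks[j].provider, qty, asks[j].price))
        bid_left[i] -= qty
        ask_left[j] -= qty
        if bid_left[i] == 0:
            i += 1  # this bid is fully filled
        if ask_left[j] == 0:
            j += 1  # this ask is fully sold
    return fills


if __name__ == "__main__":
    bids = [Bid("dev_a", 2.0, 4), Bid("dev_b", 1.0, 2)]
    asks = [Ask("gpu_x", 0.8, 3), Ask("gpu_y", 1.5, 4)]
    for fill in match_orders(bids, asks):
        print(fill)
```

Pay-as-ask pricing keeps the sketch short; a production market might instead clear at a uniform price or a bid/ask midpoint, and would record fills on-chain.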

From a fundamental perspective, these developments merit scrutiny. Token utility must extend beyond speculation; strong networks tie rewards to verifiable compute delivery, mitigating the risks seen in earlier DePIN projects. Whitepapers reveal varied approaches: io.net emphasizes cluster orchestration, while Render leverages its rendering heritage for inference efficiency.

Comparative Analysis of Leading Providers

Comparison of Leading Decentralized GPU Platforms

| Platform | Key Stats | Strengths |
|---|---|---|
| io.net | 6,720 verified GPUs (Q1 2025), $20M annualized revenue | AI-native network with decentralized GPU clusters at a fraction of hyperscaler costs; integrations like Gaia and Injective for real-world apps |
| Aethir (ATH) | $39.8M Q3 revenue, $191M market cap, up to 86% cost savings vs. centralized clouds | Enterprise-grade GPU cloud; 100+ ecosystem partners; Q4 2025 mainnet upgrade for compatibility and security |
| Render Network (RNDR) | RNDR $3.80, 40% compute capacity for AI inference, $53–62M 24h volume | Expanded from rendering to AI tasks; strong market confidence and liquidity |

Diversifying across these platforms reduces reliance on any single provider. io.net's revenue trajectory suggests it may be undervalued if adoption accelerates, yet conservative investors should monitor mainnet upgrades such as Aethir's Q4 security and compatibility enhancements. Render's $3.80 price reflects market confidence, but sustained trading volume is key for long-term holders.

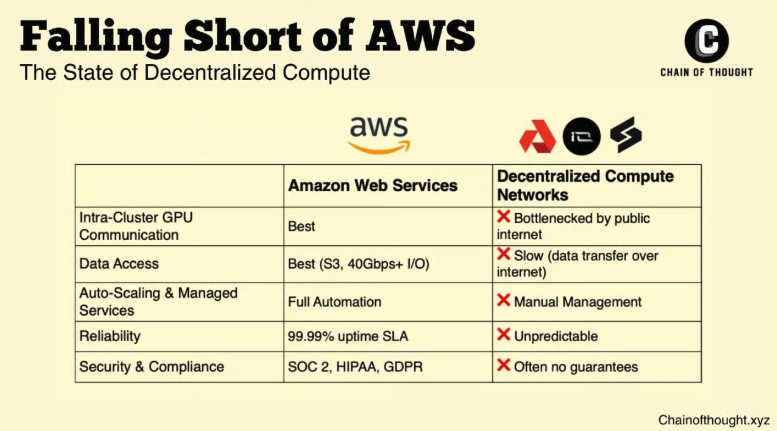

This tokenization puts idle GPUs around the world to work, easing the supply bottlenecks of centralized clouds. Developers gain flexible access, paying in tokens that capture the value accruing to the network. Challenges persist, however: ensuring low-latency inference and designing robust slashing for poor performance. As these markets mature, they promise a more resilient AI infrastructure that rewards patient capital over fleeting hype. Understanding how decentralized GPU tokenization powers AI compute starts with the underlying economics.

Tokenomics form the bedrock of sustainable growth in these decentralized inference markets. Strong protocols link token value to actual compute utilization, avoiding the dilution pitfalls of purely speculative plays. io.net's model, for example, rewards verified GPU contributions through staking and fees, creating aligned incentives. Aethir's ATH token facilitates governance and revenue sharing, and its $191 million market cap reflects real enterprise traction rather than hype. Render's RNDR, at $3.80, benefits from dual utility in rendering and inference, with $53–62 million in daily volume indicating liquidity without excessive volatility.
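The principle of tying rewards to verified utilization can be illustrated with a pro-rata split of an epoch's fee pool. This is a sketch under stated assumptions: the `distribute_rewards` function, the minimum-stake eligibility rule, and the use of verified GPU-hours as the only reward weight are hypothetical, not io.net's or Aethir's actual reward formula.

```python
def distribute_rewards(
    epoch_fees: float,
    providers: dict[str, tuple[float, float]],
    min_stake: float,
) -> dict[str, float]:
    """Split an epoch's fee pool pro rata by verified GPU-hours (hypothetical rule).

    providers maps name -> (verified_gpu_hours, staked_tokens). Providers
    staked below min_stake, or with no verified work, receive nothing.
    """
    # Only staked providers with verified work share the pool.
    eligible = {
        name: hours
        for name, (hours, stake) in providers.items()
        if stake >= min_stake and hours > 0
    }
    total_hours = sum(eligible.values())
    if total_hours == 0:
        return {name: 0.0 for name in providers}
    # Each eligible provider's share is proportional to its verified hours.
    return {
        name: epoch_fees * eligible[name] / total_hours if name in eligible else 0.0
        for name in providers
    }


if __name__ == "__main__":
    rewards = distribute_rewards(
        1000.0,
        {"a": (30, 100), "b": (10, 100), "c": (50, 10)},
        min_stake=50,
    )
    print(rewards)  # "c" is under-staked, so "a" and "b" split the pool 3:1
```

Weighting only by verified hours means unverified or under-staked capacity earns nothing, which is the link between rewards and real compute delivery that the paragraph describes.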

Risks and Realities in Tokenized GPU Ecosystems

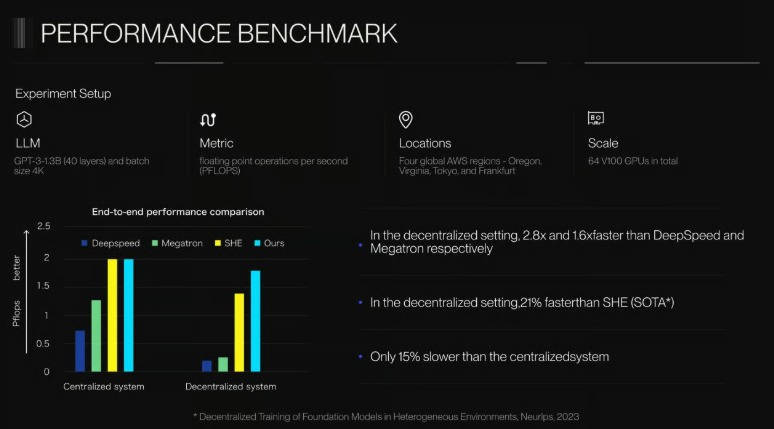

Conservative analysis demands attention to execution risks. Latency remains a hurdle for real-time inference; even where networks like Render allocate capacity efficiently, global distribution introduces variability. Slashing mechanisms, essential for accountability, must be finely tuned to avoid over-penalizing honest providers. The arXiv review of AI-token projects highlights differences in consensus and utility, urging developers to prioritize networks with proven uptime and verifiable proofs.
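One way to tune slashing so that honest providers with occasional failures are not over-penalized is to combine a tolerance band with a cap. The `slash_amount` function and its `tolerance`, `rate`, and `max_fraction` parameters are hypothetical; no network discussed here is known to use this exact policy or these values.

```python
def slash_amount(
    stake: float,
    jobs_assigned: int,
    jobs_failed: int,
    tolerance: float = 0.05,    # hypothetical: failure rate forgiven per epoch
    rate: float = 2.0,          # hypothetical: penalty per unit of excess failure
    max_fraction: float = 0.30, # hypothetical: most of the stake that can be slashed
) -> float:
    """Tokens to slash for one epoch under a hypothetical tolerance-and-cap policy.

    Failure rates at or below `tolerance` are forgiven; above it, the slashed
    fraction grows linearly (`rate` times the excess) and is capped at
    `max_fraction` of the stake.
    """
    if jobs_assigned <= 0:
        return 0.0  # no work assigned, nothing to judge
    failure_rate = jobs_failed / jobs_assigned
    excess = max(0.0, failure_rate - tolerance)
    fraction = min(max_fraction, rate * excess)
    return stake * fraction


if __name__ == "__main__":
    for failed in (3, 15, 60):
        print(failed, round(slash_amount(1000.0, 100, failed), 2))
```

The tolerance absorbs transient faults such as network hiccups, while the cap bounds the loss from any single bad epoch; both are the kind of tuning knobs the paragraph refers to.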

Regulatory shadows loom as well. As tokenized GPU markets scale, clarity on securities classification could affect liquidity. Blockchain's transparency offers a counterbalance, however, by making compute delivery auditable. Platforms that address these issues through modular upgrades, like Aethir's Q4 mainnet, position themselves for longevity. NodeGoAI and Kaisar Network, though nascent, show promise in niche P2P models, but their tokenomics warrant a close reading of their whitepapers before committing capital.

Strategic Plays for AI Developers and Investors

For developers, these decentralized AI GPU markets mean bidding for compute with AI compute tokens, cutting costs by 70–86% according to reports from Aethir and io.net. Integrating with Gaia or Injective can streamline Web3 AI apps. Investors should build conviction through metrics such as revenue growth, GPU verification rates, and partner ecosystems, and diversify across io.net's clusters, Aethir's enterprise focus, and Render's expansion into inference. Avoid FOMO; RNDR's steady $3.80 price and healthy volumes suggest resilience, but pair such positions with stable DePIN bonds for ballast.
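The reported 70–86% savings translate into a cost band by simple arithmetic. The `cost_range` helper and the $10,000 baseline in the usage example are hypothetical; actual savings depend on the workload, GPU type, and token price at the time of payment.

```python
def cost_range(
    baseline_cost: float, min_savings: float = 0.70, max_savings: float = 0.86
) -> tuple[float, float]:
    """Return (lowest, highest) expected cost after applying a savings range.

    Savings are fractions of the baseline, e.g. 0.70 for 70%.
    """
    if not 0.0 <= min_savings <= max_savings < 1.0:
        raise ValueError("expected 0 <= min_savings <= max_savings < 1")
    # Largest saving gives the lowest cost; smallest saving gives the highest.
    return baseline_cost * (1 - max_savings), baseline_cost * (1 - min_savings)


if __name__ == "__main__":
    low, high = cost_range(10_000.0)  # hypothetical monthly hyperscaler bill
    print(f"${low:,.0f} to ${high:,.0f}")
```

For a hypothetical $10,000 monthly hyperscaler bill, the band works out to roughly $1,400 to $3,000 on a decentralized network, before any token price movement.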

GAIB and Aethir's tokenized GPU product pioneers liquid financing, letting holders gain exposure without owning hardware. Fractionalizing the asset lowers barriers to entry and draws capital to underutilized hardware. As idle GPUs worldwide come online, elastic supply meets surging demand, which should stabilize prices over the long term. GDePIN's Western focus complements the global players, fostering regional resilience.
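A rough way to reason about fractional exposure is to estimate the yield one fraction of a tokenized GPU might earn. The `fractional_yield` function, its inputs, and the assumption that net revenue is split evenly across fractions are illustrative only; this does not describe the terms of the GAIB and Aethir product.

```python
HOURS_PER_YEAR = 24 * 365


def fractional_yield(
    hourly_rate: float,
    utilization: float,
    annual_opex: float,
    total_fractions: int,
    fraction_price: float,
) -> float:
    """Estimated annual yield on one fraction of a tokenized GPU (illustrative).

    Assumes net revenue (gross rental income minus operating costs) is
    distributed evenly across all fractions.
    """
    gross = hourly_rate * utilization * HOURS_PER_YEAR  # rental income per year
    net = gross - annual_opex
    return (net / total_fractions) / fraction_price


if __name__ == "__main__":
    # Hypothetical inputs: $2/hour, 50% utilized, $2,760 yearly opex,
    # split into 1,000 fractions sold at $30 each.
    print(f"{fractional_yield(2.0, 0.5, 2_760.0, 1_000, 30.0):.1%}")
```

Utilization is the sensitive input: with the same hypothetical inputs at 25% utilization, net revenue falls from $6,000 to $1,620 and the yield from 20% to 5.4%, which is why activating idle capacity matters to holders.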

Examining the decentralized GPU networks that power scalable AI reveals efficiencies in both training and inference. io.net's 6,720 GPUs and $20 million revenue trajectory hint at undervaluation and merit a place on watchlists. Aethir's 100+ partners signal that network effects are kicking in.

Ultimately, tokenized GPU resources cultivate permissionless innovation. Developers access scalable inference without vendor lock-in; suppliers monetize assets securely. Patient capital, rooted in fundamentals, will harvest rewards as blockchain inference trading matures into infrastructure bedrock. Monitor upgrades and integrations; conviction here trumps chasing peaks.
