In the evolving landscape of decentralized inference markets, where AI models must prove their integrity without centralized trust, Inference Labs has introduced DSperse as a pivotal innovation. This framework tackles the computational hurdles of generating zero-knowledge proofs (ZKPs) for large machine learning models by slicing them into manageable segments. What sets DSperse apart is its pragmatic design, which enables verifiable AI inference on blockchain infrastructure while cutting latency and resource demands. As investors eye sustainable growth in projects like these, DSperse positions Inference Labs at the forefront of proof of inference for decentralized AI.

The Mechanics of Model Slicing in DSperse

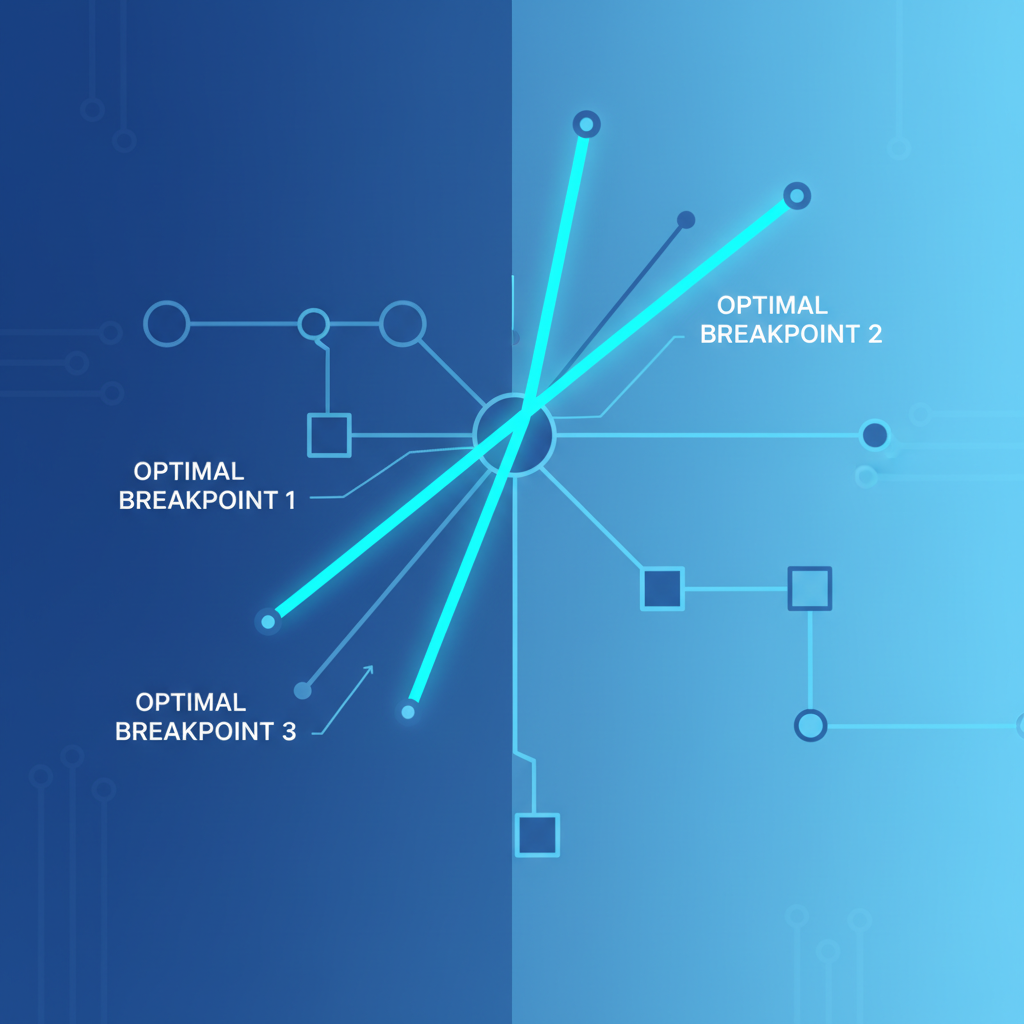

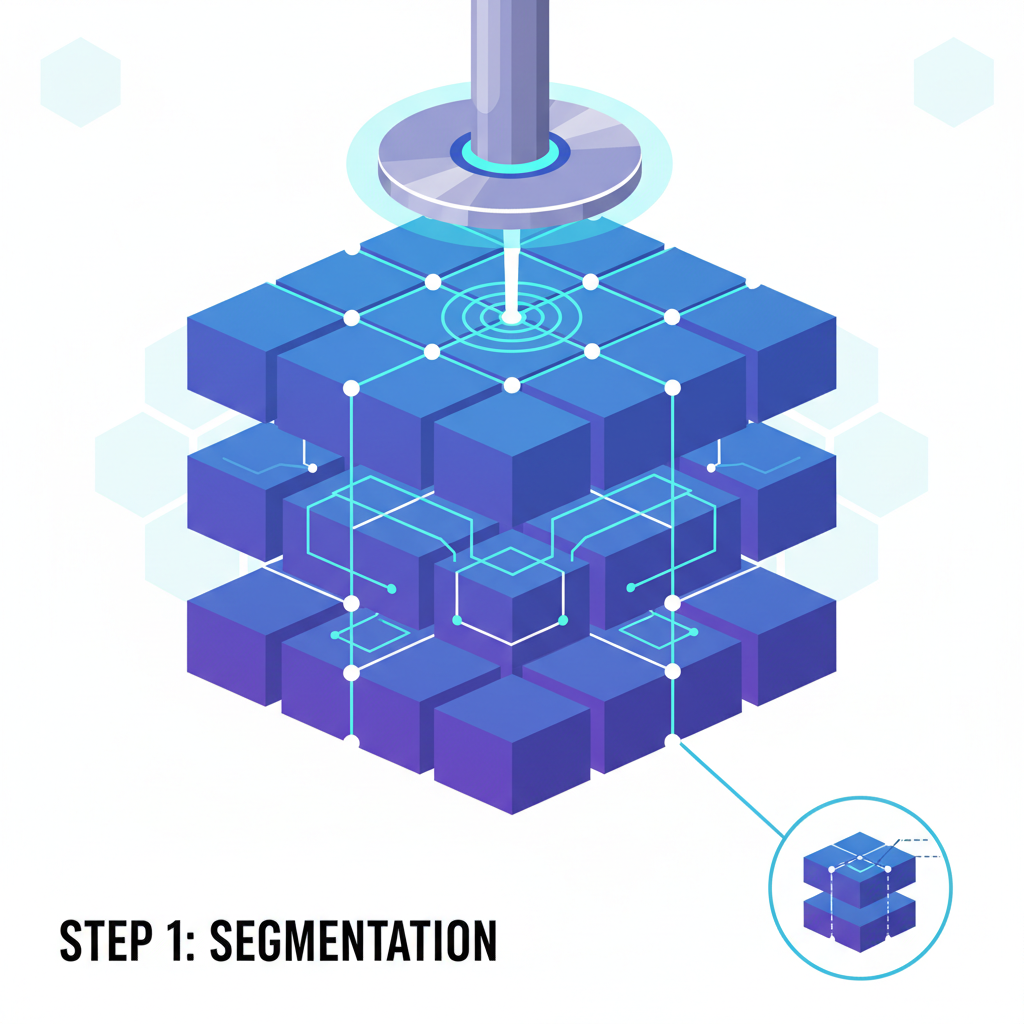

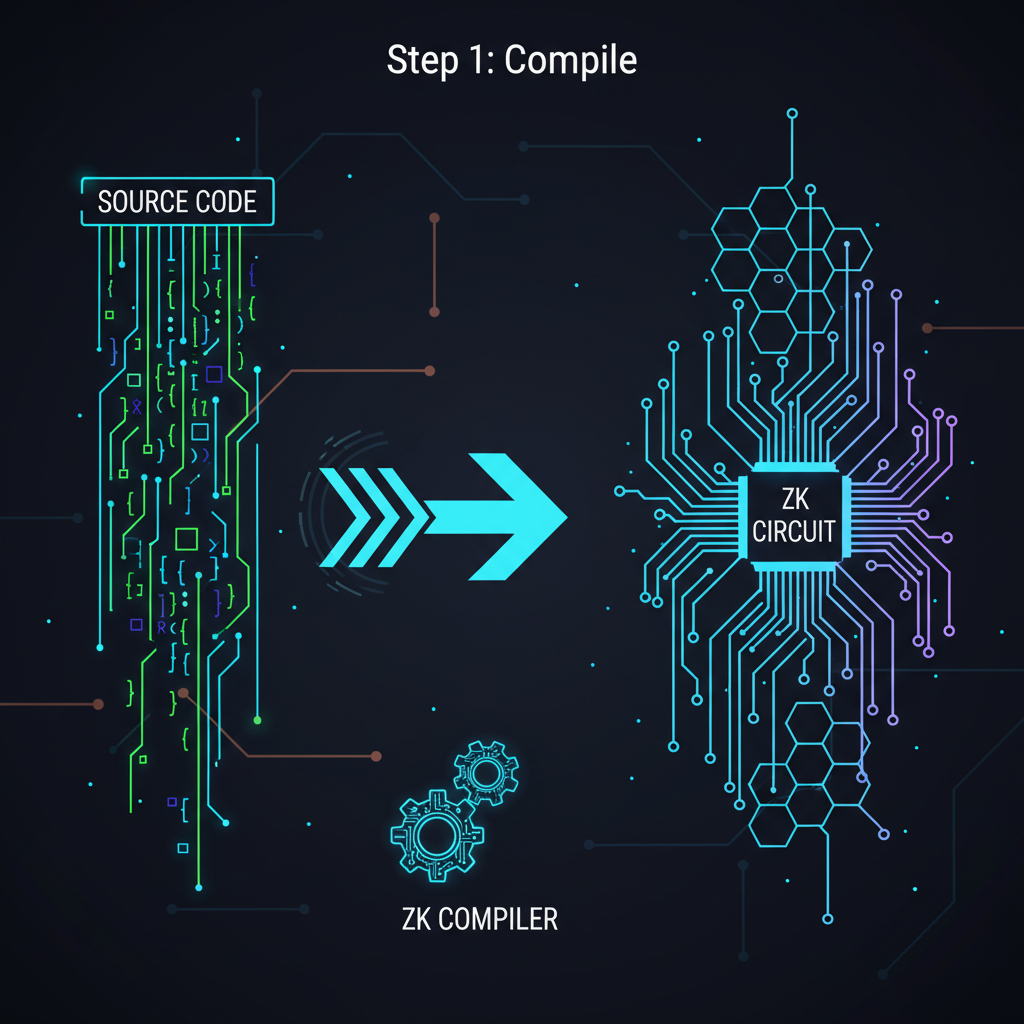

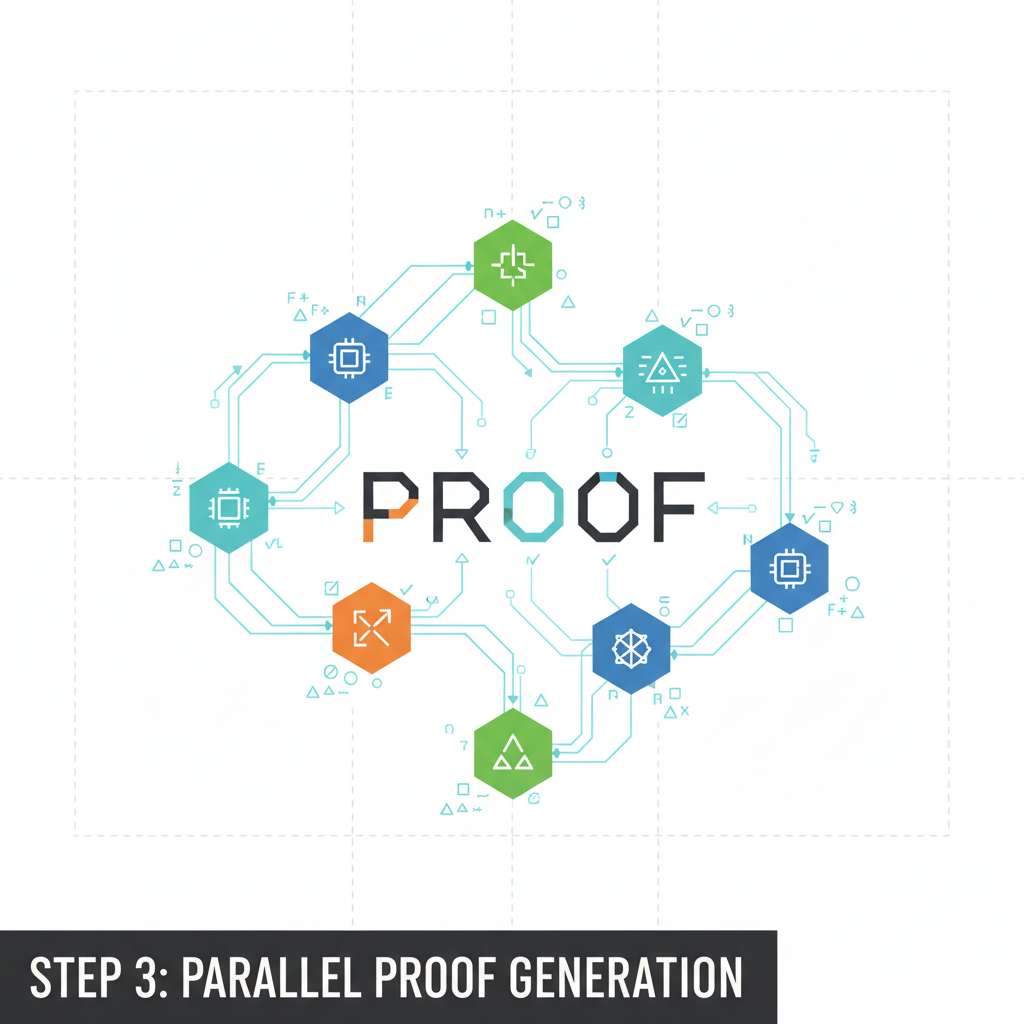

Traditional zero-knowledge machine learning (zkML) struggles with the sheer scale of modern AI models, often demanding gigabytes of memory and hours for proof generation. DSperse disrupts this by dissecting models at optimal breakpoints, creating independent slices compiled into DSIL files. Each slice undergoes execution and proof generation separately, allowing parallel processing across distributed nodes.
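The slice-then-prove idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not DSperse's actual API or DSIL format: the layer names, the breakpoint indices, and the `prove_slice` function are hypothetical, and the "proof" is only a SHA-256 digest standing in for real proof generation.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor

def slice_model(layers, breakpoints):
    """Split an ordered layer list into contiguous slices at the given indices."""
    bounds = [0, *sorted(breakpoints), len(layers)]
    return [layers[a:b] for a, b in zip(bounds, bounds[1:]) if a < b]

def prove_slice(index, slice_layers):
    """Hypothetical stand-in for per-slice proving: returns a digest, not a ZK proof."""
    payload = f"{index}:{','.join(slice_layers)}".encode()
    return hashlib.sha256(payload).hexdigest()

def prove_all(slices, max_workers=4):
    """Prove every slice independently; results come back in slice order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(prove_slice, range(len(slices)), slices))

# Illustrative six-layer model cut at two assumed breakpoints.
layers = ["conv1", "relu1", "conv2", "relu2", "fc1", "fc2"]
slices = slice_model(layers, breakpoints=[2, 4])
proofs = prove_all(slices)
```

Because each slice is proven without reference to the others, the work can be handed to separate workers (threads here, distributed nodes in a real network), and the peak memory per job is bounded by the largest slice rather than the whole model.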

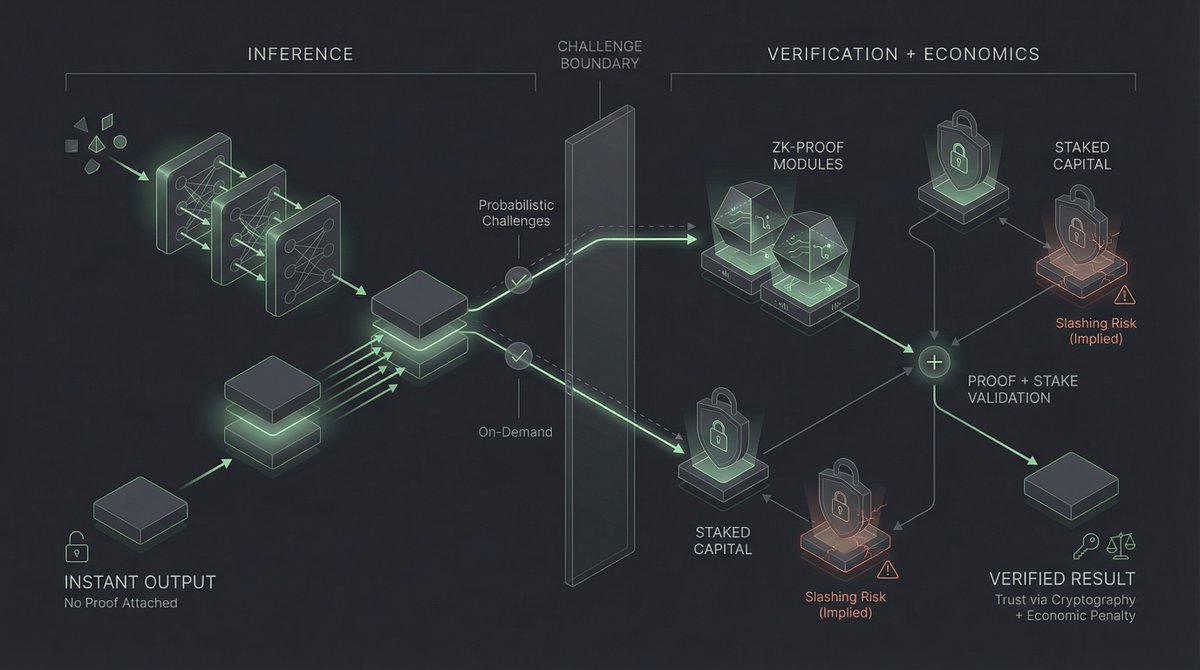

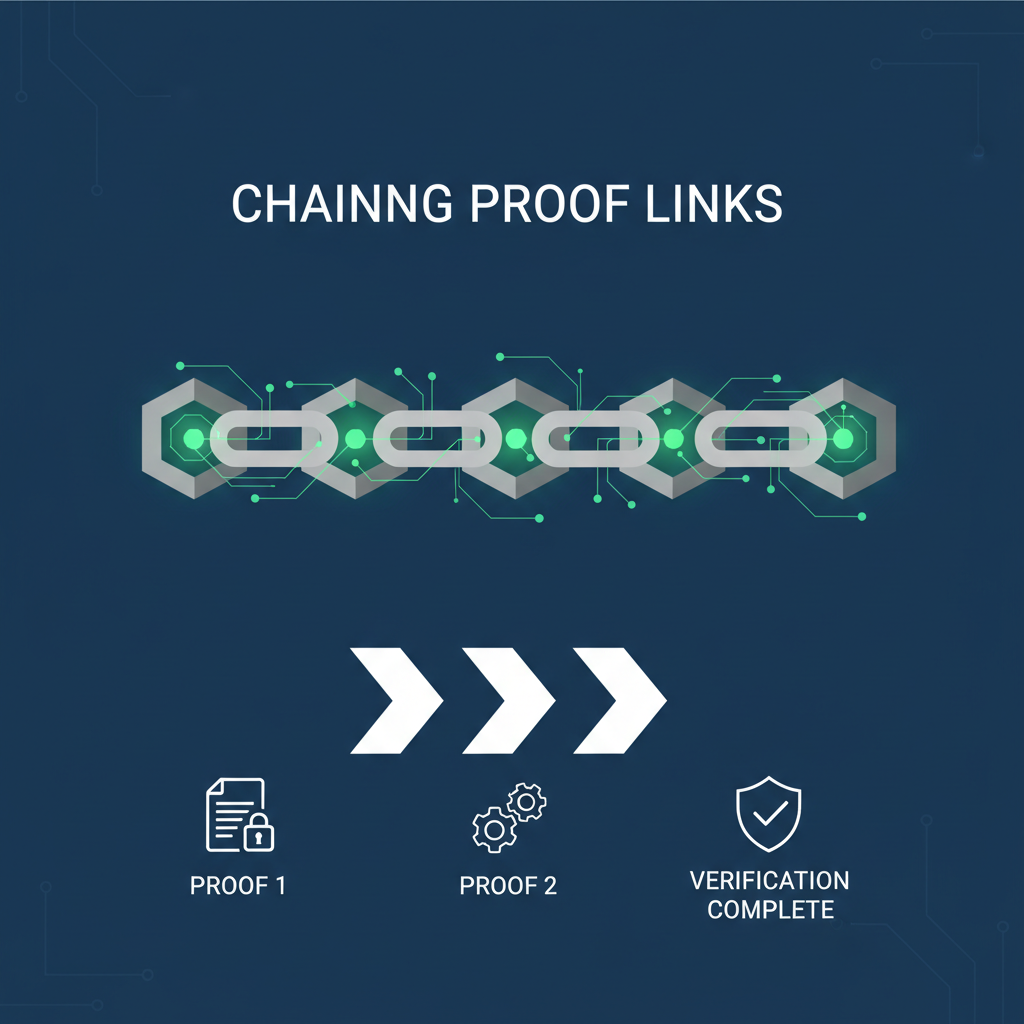

This distributed approach leverages ZK backends where feasible, with graceful fallbacks to ONNX runtime for resilience. The result? A chained execution that maintains end-to-end verifiability without compromising model fidelity. From the arXiv paper on DSperse, it's clear this framework prioritizes targeted verification over full-model proofs, ideal for decentralized environments where node resources vary widely.
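A chained run with fallback might look roughly like the sketch below. Everything in it is an assumption made for illustration: `run_zk`, `run_plain`, the `supports_zk` flag, and the `ProverUnavailable` exception are hypothetical names, and the slices are plain Python functions rather than compiled circuits or ONNX graphs.

```python
class ProverUnavailable(Exception):
    """Raised by the hypothetical ZK backend when it cannot handle a slice."""

def run_zk(slice_fn, x, supports_zk):
    """Pretend ZK path: computes the slice and tags the result as proven."""
    if not supports_zk:
        raise ProverUnavailable("slice not supported by ZK backend")
    return slice_fn(x), "zk"

def run_plain(slice_fn, x):
    """Pretend fallback path: computes the slice without a proof."""
    return slice_fn(x), "onnx-fallback"

def run_chain(slices, x):
    """Feed each slice's output into the next and record which path ran."""
    trace = []
    for slice_fn, supports_zk in slices:
        try:
            x, mode = run_zk(slice_fn, x, supports_zk)
        except ProverUnavailable:
            x, mode = run_plain(slice_fn, x)
        trace.append(mode)
    return x, trace

# Three toy slices; the second one is assumed to be unsupported by the ZK backend.
slices = [
    (lambda v: v * 2, True),
    (lambda v: v + 3, False),
    (lambda v: v ** 2, True),
]
output, trace = run_chain(slices, 5)
```

Recording the execution mode per slice is a design choice of this sketch: it lets a downstream verifier see which segments carry a proof and which ran on the fallback path.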

Inference Labs' engineering shines here: they've optimized for real-world deployment, processing over 281 million zkML proofs by August 2025. This isn't theoretical; it's production-grade scalability that turns sliced-model zkML proofs from concept into commodity.

Performance Gains Redefining Verifiable AI Inference

DSperse 2.0 delivers concrete metrics that demand attention. Inference Labs reports a 65% increase in proof speed alongside memory usage under 1 GB per proof. These advancements stem from refined circuit compilation and node orchestration within their Inference Network Runtime, which securely executes AI tasks and auto-generates cryptographic attestations.

Consider the implications for blockchain applications of verifiable AI inference. In a network like EigenLayer's AVS, where DSperse integrates via ZK-VIN, operators can now verify complex inferences on-chain without black-box risks. Provers confirm exact model weights, untampered inputs, and correct outputs, fostering trust in decentralized AI marketplaces.
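To make the three bound quantities concrete, here is a simplified sketch that uses plain hash commitments. It is not a zero-knowledge proof and not the ZK-VIN protocol: the `commit`, `make_attestation`, and `check_attestation` helpers and the toy linear model are hypothetical. A real ZK proof would additionally show that the output was computed from the committed weights and inputs, without requiring the verifier to hold them.

```python
import hashlib
import json

def commit(obj):
    """SHA-256 digest over a canonical JSON encoding of the object."""
    data = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(data).hexdigest()

def make_attestation(weights, inputs, outputs):
    """Bind the model weights, the inputs, and the outputs into one record."""
    return {
        "weights": commit(weights),
        "inputs": commit(inputs),
        "outputs": commit(outputs),
    }

def check_attestation(att, weights, inputs, outputs):
    """Recompute the commitments and compare them with the attestation."""
    return att == make_attestation(weights, inputs, outputs)

# Toy linear model: output = w . x + b
weights = {"w": [0.5, -1.0], "b": 0.25}
inputs = [2.0, 4.0]
outputs = [sum(w * x for w, x in zip(weights["w"], inputs)) + weights["b"]]
att = make_attestation(weights, inputs, outputs)

# Swapping in different weights changes the commitment and fails the check.
tampered = {"w": [0.5, -1.1], "b": 0.25}
```

Calling `check_attestation(att, weights, inputs, outputs)` returns `True`, while the same call with `tampered` in place of `weights` returns `False`.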

Recent partnerships amplify this. By collaborating with Lagrange on the DeepProve zkML library, Inference Labs bolsters ecosystem standards. Their $6.3 million raise underscores market conviction, funding a cryptographic trust layer for AI agents and off-chain compute. With Proof of Inference live on testnet and mainnet eyed for late Q3 2025, momentum builds toward the decentralized inference markets of 2026.

Strategic Positioning in the zkML Landscape

Inference Labs' GitHub repository for DSperse reveals a mature toolchain: commands like 'dsperse run' chain slices with hybrid ZK/ONNX execution, minimizing failures in heterogeneous networks. This robustness appeals to AI developers seeking reliable oracles for any computation.

From an investment lens, projects emphasizing fundamentals like these outperform hype-driven tokens. DSperse's verifiable oracles on Subnet 2 exemplify sustainable architecture, attracting compute providers and verifiers to tokenized inference economies. Equilibrium.co's state-of-verifiable-inference analysis aligns, highlighting how such slicing proves model correctness without full recomputation.

As decentralized networks scale, DSperse's slice-and-prove paradigm lowers barriers, enabling broader participation in AI inference trading. This shift from centralized bottlenecks to distributed trust layers promises enduring value for long-term holders.

Yet the true test lies in adoption and integration. Inference Labs' DSperse doesn't just theorize efficiency; it equips builders with tools for immediate impact in proof of inference for decentralized AI.

Once a model is sliced, its proofs are generated in parallel, cutting proof times from hours to minutes. For a Llama-3 variant, DSperse achieves sub-second inferences with cryptographic guarantees. This practicality draws AI agents that need off-chain compute verified on-chain, a cornerstone for autonomous economies.

Inference Labs' testnet Proof of Inference already hums with activity, processing tasks from image recognition to natural language processing. Mainnet's late Q3 2025 arrival will unlock tokenized incentives, rewarding provers and compute nodes in a merit-based marketplace.

Bittensor Technical Analysis Chart

Analysis by John Smith | Symbol: BINANCE:TAOUSDT | Interval: 1D | Drawings: 6

Technical Analysis Summary

As a conservative fundamental analyst, I recommend drawing a prominent downtrend line connecting the recent highs from mid-November 2026 to the sharp drop in late December 2026, using the 'trend_line' tool in red with medium thickness. Add horizontal support at 10.3 (recent low) and resistance at 18.5 (prior swing low), both as 'horizontal_line' in dashed style. Mark the breakdown zone with a 'rectangle' from 2026-12-15 to present, spanning the 12-25 price levels. Use 'arrow_mark_down' at the MACD bearish crossover point around early December. Place 'callout' texts for volume divergence and key support levels. Draw a Fibonacci retracement from the June 2026 peak to the current low to identify potential pullback zones. Add a vertical line marking the news-impacted drop tied to Inference Labs updates.

Risk Assessment: high

Analysis: Volatile breakdown with decoupling from fundamentals; low liquidity signals high slippage risk in DeFi context.

John Smith's Recommendation: Stay sidelined, monitor for liquidity inflow via cross-chain metrics before entry. Diversify portfolio away from single-token bets.

Key Support & Resistance Levels

📈 Support Levels:

- $10.3 - Recent panic low; potential bounce if volume picks up, but weak without fundamentals. (Strength: weak)

- $12.8 - Prior swing low from October; moderate hold if reclaimed. (Strength: moderate)

📉 Resistance Levels:

- $18.5 - Broken support now resistance; key level for any recovery. (Strength: moderate)

- $25 - November consolidation high; strong overhead barrier. (Strength: strong)

Trading Zones (low risk tolerance)

🎯 Entry Zones:

- $11.2 - Dip buy near support if volume divergence confirms reversal, aligned with low-risk tolerance. (Risk: medium)

- $10.3 - Ultimate support test; only on bullish candle close with news catalyst. (Risk: high)

🚪 Exit Zones:

- $15.5 - Initial profit target at minor resistance. (💰 Profit target)

- $9.5 - Tight stop below panic low to preserve capital. (🛡️ Stop loss)
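The reward-to-risk arithmetic implied by these zones can be checked with a short sketch. Pairing each entry with the single $15.5 target and the $9.5 stop is an assumption made for illustration; the analysis above does not state which exits belong to which entry, and `risk_reward` is a hypothetical helper.

```python
def risk_reward(entry, target, stop):
    """Reward-to-risk ratio for a long position: (target - entry) / (entry - stop)."""
    risk = entry - stop
    reward = target - entry
    if risk <= 0 or reward <= 0:
        raise ValueError("expected stop < entry < target for a long position")
    return reward / risk

# Entry zones from the list above, each paired with the $15.5 target and $9.5 stop.
ratio_upper = risk_reward(entry=11.2, target=15.5, stop=9.5)  # 4.3 / 1.7, about 2.5
ratio_lower = risk_reward(entry=10.3, target=15.5, stop=9.5)  # 5.2 / 0.8, about 6.5
```

The lower entry shows a higher ratio only because it sits closer to the stop; it is also the entry the analysis labels high risk, since it depends on the $10.3 support holding.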

Technical Indicators Analysis

📊 Volume Analysis:

Pattern: decreasing on breakdown

Volume fading on downside move suggests exhaustion, potential for base building.

📈 MACD Analysis:

Signal: bearish crossover

MACD line crossed below signal in early December, aligning with price drop.
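For readers unfamiliar with the indicator, a bearish MACD crossover can be computed as sketched below. The 12/26/9 spans are the conventional defaults, assumed here because the analysis does not state its settings, and the price series is synthetic (a steady rise followed by a decline), not TAO market data.

```python
def ema(values, span):
    """Exponential moving average seeded with the first value."""
    alpha = 2 / (span + 1)
    out = [values[0]]
    for v in values[1:]:
        out.append(alpha * v + (1 - alpha) * out[-1])
    return out

def macd(closes, fast=12, slow=26, signal=9):
    """Return (macd_line, signal_line) for a list of closing prices."""
    macd_line = [f - s for f, s in zip(ema(closes, fast), ema(closes, slow))]
    signal_line = ema(macd_line, signal)
    return macd_line, signal_line

def bearish_crossovers(macd_line, signal_line):
    """Indices where MACD moves from at-or-above the signal line to below it."""
    return [
        i
        for i in range(1, len(macd_line))
        if macd_line[i - 1] >= signal_line[i - 1] and macd_line[i] < signal_line[i]
    ]

# Synthetic closes: 30 rising bars (20..49), then 20 falling bars (47, 45, ...).
closes = [20 + i for i in range(30)] + [49 - 2 * i for i in range(1, 21)]
macd_line, signal_line = macd(closes)
crosses = bearish_crossovers(macd_line, signal_line)
```

On this series the first bearish crossover lands shortly after the price peak at index 29, which mirrors the pattern described above: the MACD line turns down with price and drops below its slower-moving signal line.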

Disclaimer: This technical analysis by John Smith is for educational purposes only and should not be considered as financial advice. Trading involves risk, and you should always do your own research before making investment decisions. Past performance does not guarantee future results. The analysis reflects the author's personal methodology and risk tolerance (low).

Ecosystem Synergies and Long-Term Value

DSperse thrives amid synergies. EigenLayer's restaking secures ZK-VIN, while Lagrange's DeepProve sharpens zkML primitives. These alliances mitigate oracle risks, proving not just outputs but entire inference pipelines. Equilibrium.co notes this verifies model weights and input integrity, essential as AI scales to trillion-parameter behemoths.

From a fundamental standpoint, Inference Labs embodies my investment philosophy: patience rewards architecture over memes. Their 281 million proofs signal network effects kicking in, with DSperse 2.0's DSIL files enabling modular upgrades. In the decentralized inference markets of 2026, expect DSperse to anchor protocols trading inference compute, much like Bittensor tokenizes intelligence.

Challenges persist: circuit optimization for novel architectures like MoE models demands iteration. Yet Inference Labs' $6.3 million war chest and open-source ethos position them to iterate swiftly. Verifiers gain from low-cost proofs; developers from plug-and-play oracles; investors from captured value in verifiable AI.

Picture marketplaces where slices trade as NFTs, proofs as attestations, and agents bid for compute. DSperse engineers this reality, bridging Web2 AI potency with Web3 trustlessness. For those charting crypto's next leg, Inference Labs' DSperse merits a core allocation; fundamentals like these endure volatility.

The trajectory points upward: as ZK tech matures, sliced proofs commoditize verifiable AI inference on blockchains, democratizing access. Inference Labs isn't chasing hype; they're forging infrastructure that outlasts it.
