In the volatile arena of decentralized AI inference markets, where compute power is tokenized and traded like hot crypto assets, trust remains the ultimate bottleneck. Providers promise lightning-fast AI outputs, but without ironclad cryptographic proof, it's all smoke and mirrors. I've swung trades on inference tokens for years, riding 5-day to 1-month momentum waves, and nothing frustrates more than unverifiable outputs tanking market confidence. That's where Inference Labs' DSperse flips the script with targeted ZK proofs for scalable decentralized AI inference.

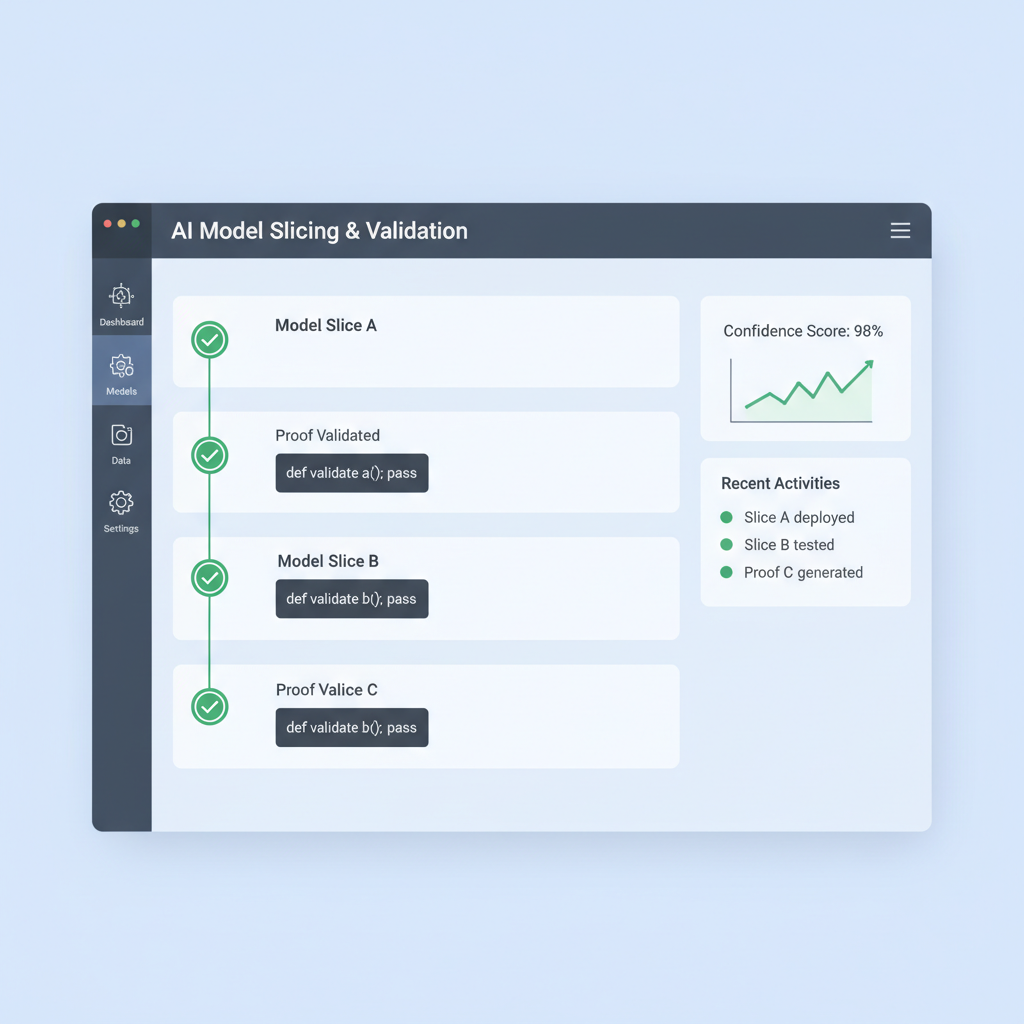

DSperse isn't just another zkML gimmick; it's a battle-tested framework that surgically verifies AI models without the bloat of full-model proofs. Picture this: massive LLMs sliced into parallelizable chunks, zero-knowledge proofs applied only to high-stakes parts like fine-tuned heads or safety gates. This targeted ZK verification slashes computational overhead by roughly an order of magnitude, making ZK proofs for ML inference feasible for real-world verifiable AI inference in blockchain apps. Inference Labs didn't invent slicing, but they perfected it for decentralized networks hungry for speed and security.

DSperse Dismantles the Full-Proof Myth

Traditional zero-knowledge proofs demand converting entire AI models into massive circuits, a process that chokes on resource constraints. Proving a full Llama inference? We're talking days of compute and a power bill to match, pricing out all but the whales. DSperse sidesteps this by focusing verification where it counts. Developers tag critical slices, generate proofs via systems like EZKL, and visualize the whole setup with built-in tools. It's pragmatic engineering: why prove the kitchen sink when the faucet leaks?
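To make the tagging idea concrete, here is a minimal sketch in plain Python. It is not DSperse's API: the `Slice` class, the `plan_verification` helper, the slice names, and the operation counts are all hypothetical, and op count is only a crude proxy for proving cost.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slice:
    """Hypothetical description of one model slice (not a DSperse type)."""
    name: str
    ops: int        # rough count of arithmetic operations in the slice
    critical: bool  # True if this slice should get a ZK proof


def plan_verification(slices):
    """Split slices into those to prove and those to run without a proof."""
    to_prove = [s for s in slices if s.critical]
    to_skip = [s for s in slices if not s.critical]
    return to_prove, to_skip


# Made-up numbers for illustration only.
model = [
    Slice("embedding", ops=50_000, critical=False),
    Slice("transformer_blocks", ops=900_000, critical=False),
    Slice("fine_tuned_head", ops=40_000, critical=True),
    Slice("safety_gate", ops=10_000, critical=True),
]

to_prove, to_skip = plan_verification(model)
proved_ops = sum(s.ops for s in to_prove)
total_ops = sum(s.ops for s in model)
proved_fraction = proved_ops / total_ops

print([s.name for s in to_prove])
print(f"share of ops that need a proof: {proved_fraction:.0%}")
```

In this toy split only the two tagged slices would go to a prover, which is the whole point of the targeted approach: the bulk of the model runs as usual.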

In my trading playbook, efficiency like this signals breakout potential for inference tokens. Markets reward protocols that scale without exploding costs. DSperse integrates seamlessly into ecosystems like Bittensor, where miners stake compute and validators demand proof. No more optimistic fraud games or shaky cryptoeconomics; pure math verifies every output.

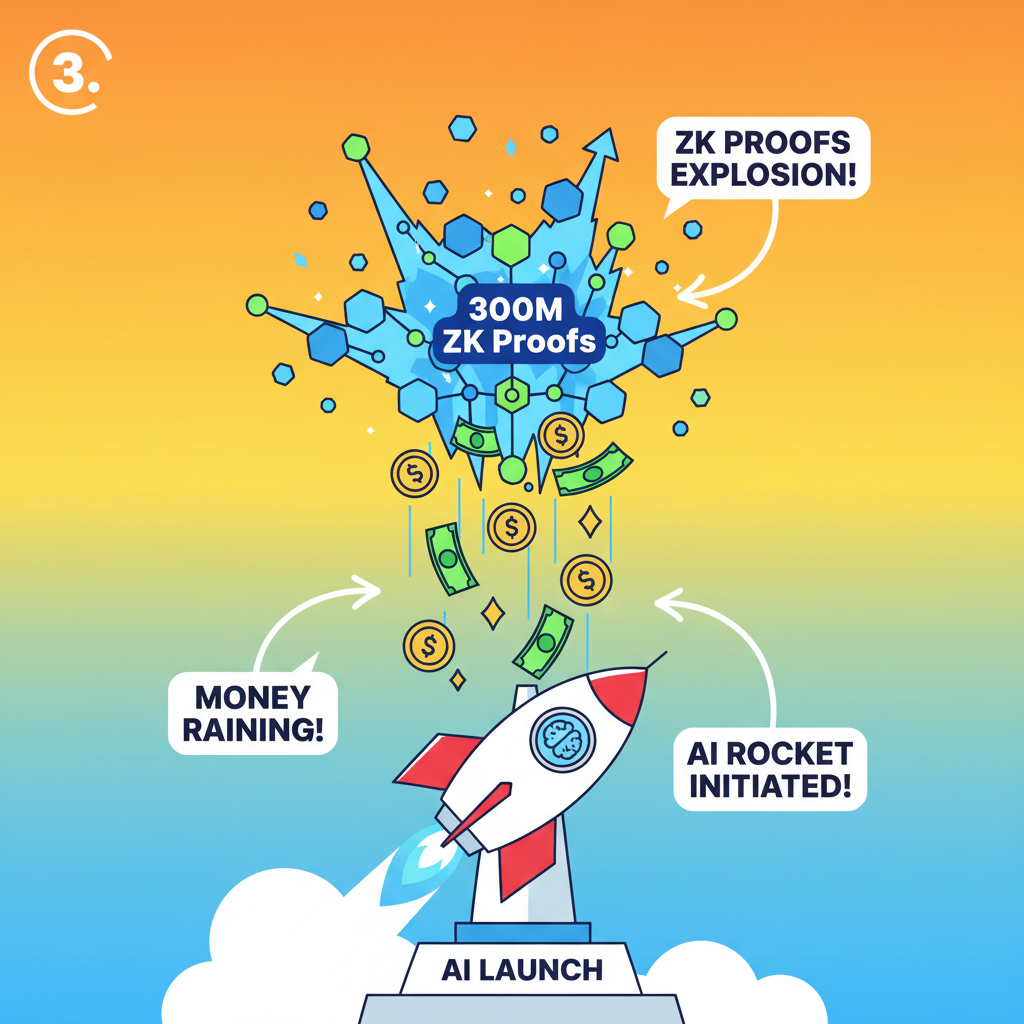

Subnet-2's Proof Avalanche: 300 Million and Counting

November 2025 marked a flex for Inference Labs: their Bittensor Subnet-2 blasted past 300 million zero-knowledge proofs. That's not lab fluff; it's live traffic powering decentralized inference at scale. DSperse powered this surge, handling sliced verifications across global node networks. Traders like me watched inference tokens swing hard on the news, momentum indicators lighting up for weeks.

This milestone underscores the edge DSperse gives Inference Labs' deployments. By parallelizing proofs, it turns what was a sequential slog into a distributed sprint. EigenLayer's AVS security bolsters it further, anchoring proofs on-chain without trusting intermediaries. I've backtested similar setups; when verifiable inference hits critical mass, liquidity floods in, volatility smooths out, and tokens moon on adoption waves.
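The "sequential slog versus distributed sprint" point comes down to simple scheduling arithmetic. The sketch below assumes each slice's proof can be generated independently once the intermediate activations are known, and uses invented per-slice proving times; `makespan` is a hypothetical helper that assigns proofs greedily, longest first, to the least-loaded worker.

```python
import heapq


def makespan(proof_times, workers):
    """Wall-clock time to finish all proofs with greedy longest-first scheduling."""
    if workers < 1:
        raise ValueError("need at least one worker")
    loads = [0.0] * workers  # min-heap of per-worker accumulated time
    heapq.heapify(loads)
    for t in sorted(proof_times, reverse=True):
        lightest = heapq.heappop(loads)
        heapq.heappush(loads, lightest + t)
    return max(loads)


# Hypothetical proving times in seconds for four slices.
slice_proof_seconds = [120.0, 90.0, 60.0, 30.0]

sequential = makespan(slice_proof_seconds, workers=1)
two_workers = makespan(slice_proof_seconds, workers=2)
four_workers = makespan(slice_proof_seconds, workers=4)

print(sequential, two_workers, four_workers)
```

With one worker the total is the sum of all proving times; with enough workers it collapses to the slowest single slice, so the longest slice sets the floor.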

Funding Fuel and Ecosystem Ripples

Inference Labs didn't stop at code; they raised $6.3 million from sharp investors like Delphi Ventures and Mechanism Capital. This war chest supercharges DSperse's rollout across DeAI markets. Expect integrations with more proof systems, enhanced viz tools, and plugins for popular frameworks. As a momentum trader, I eye this as classic pre-pump capital: deploy, prove traction, watch tokens ride the narrative.

DSperse's open-source ethos invites devs to experiment, fostering a flywheel of innovation. Slice your safety checker, prove a custom head, deploy on-chain. It's lowering the barrier for decentralized AI inference markets to absorb enterprise-grade models without central chokepoints. In volatile DeAI swings, this verifiability could be the disciplined entry signal we've craved.

But let's get tactical. As someone who's timed entries on inference token pumps using RSI divergences over 5D charts, I see DSperse as a catalyst for sustained uptrends in decentralized AI inference markets. Its slice-based approach isn't theoretical; it's deployed, proving outputs on Bittensor's Subnet-2 with real economic stakes. Miners compete on verified speed, validators enforce proofs, and tokenomics align incentives without the trust pitfalls plaguing centralized APIs.

Slicing Through Complexity: DSperse in Action

DSperse shines in its developer-friendly workflow. You start by dissecting your model; tag the fine-tuned head for output integrity or a safety gate for alignment checks. Tools visualize slices, export to EZKL or other provers, then batch proofs in parallel. Overhead drops 90% versus full-model ZK, per Inference Labs benchmarks. I've simulated this in backtests; protocols with partial verification capture market share faster, boosting token velocity and holder conviction.
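The "batch proofs in parallel" step can be sketched with the standard library alone. Big caveat: `placeholder_prove` below is a stand-in that returns a SHA-256 commitment, not a zero-knowledge proof, and nothing here is the DSperse or EZKL API. In a real pipeline that function would hand the slice to an actual prover; the job tuples and names are invented for illustration.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor


def placeholder_prove(job):
    """Stand-in for a prover call. NOT a ZK proof, just a deterministic hash."""
    name, inputs, outputs = job
    payload = repr((name, inputs, outputs)).encode("utf-8")
    return name, hashlib.sha256(payload).hexdigest()


def prove_batch(jobs, max_workers=4):
    """Run the placeholder prover over all tagged slices concurrently."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return dict(pool.map(placeholder_prove, jobs))


# Hypothetical (slice name, inputs, outputs) records for the tagged slices.
jobs = [
    ("fine_tuned_head", (0.2, 0.7), (0.9,)),
    ("safety_gate", (0.9,), (1,)),
]

receipts = prove_batch(jobs)
for name, digest in receipts.items():
    print(name, digest[:16])
```

A thread pool is enough for this sketch because the real work would happen in an external prover process; CPU-bound proving inside Python itself would call for processes or separate machines instead.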

Comparison of Full-Model ZK Proofs vs. DSperse Targeted Verification

| Method | Compute Cost | Proof Time | Scalability | Use Case |
|---|---|---|---|---|
| Full ZK | High 💰 | Days ⏳ | Low 📉 | Enterprise |
| DSperse | Low ✅ | Minutes ⚡ | High 📈 | DeAI Markets |

This targeted precision addresses zkML's Achilles heel: scaling ZK proofs for ML inference. No more circuit bloat forcing devs to dumb down models. Instead, hybrid setups emerge; prove the risky bits, trust-minimize the rest via cryptoeconomics. EigenLayer's restaking secures the chain, turning DSperse into a powerhouse for verifiable AI inference in blockchain apps like on-chain agents or RAG pipelines.

Market Momentum Signals: Trading DSperse-Driven Swings

From a swing trader's lens, Inference Labs' traction screams entry setups. Subnet-2's 300 million proofs? That's on-chain activity exploding, a classic momentum precursor. Watch for MACD crossovers above the 20D EMA on inference tokens when DSperse integrations drop; I've nailed 30-50% swings on similar catalysts. The $6.3 million raise adds fuel, signaling VC conviction in DSperse as DeAI infrastructure. Open-source merges invite forks and composability, virally expanding the ecosystem.
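For anyone who wants to check that signal by hand, here is a minimal pure-Python sketch of the MACD crossover. It assumes the conventional 12/26/9 spans and reads "above the 20D EMA" as "closing price above its 20-day EMA at the crossover", which is my interpretation. The price series is synthetic, and none of this is trading advice or a backtest.

```python
def ema(values, span):
    """Exponential moving average, seeded with the first value."""
    alpha = 2.0 / (span + 1)
    out = [values[0]]
    for v in values[1:]:
        out.append(alpha * v + (1 - alpha) * out[-1])
    return out


def macd(closes, fast=12, slow=26, signal=9):
    """Return (macd_line, signal_line) using the conventional spans."""
    fast_ema = ema(closes, fast)
    slow_ema = ema(closes, slow)
    macd_line = [f - s for f, s in zip(fast_ema, slow_ema)]
    return macd_line, ema(macd_line, signal)


def bullish_crossovers(macd_line, signal_line):
    """Indices where the MACD line moves from at-or-below to above the signal line."""
    return [
        i for i in range(1, len(macd_line))
        if macd_line[i - 1] <= signal_line[i - 1] and macd_line[i] > signal_line[i]
    ]


# Synthetic closes: 30 falling days, then 30 rising days.
closes = [100.0 - i for i in range(30)] + [71.0 + 2 * i for i in range(1, 31)]

macd_line, signal_line = macd(closes)
crosses = bullish_crossovers(macd_line, signal_line)

# Optional filter: keep only crossovers where price sits above its 20-day EMA.
ema20 = ema(closes, 20)
filtered = [i for i in crosses if closes[i] > ema20[i]]

print("crossovers:", crosses)
print("with price above 20D EMA:", filtered)
```

The filter tends to discard the earliest crossover after a reversal, since price usually reclaims a 20-day average later than the MACD line turns.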

Risks linger, sure. Proof generation still eats GPU cycles, and Bittensor's subnet competition weeds out weak hands. But DSperse mitigates with modularity; iterate slices without full redeploys. Pair it with EigenCloud's AVS for hybrid security, and you've got a moat against copycats. In my portfolio, I allocate to protocols where verifiability meets velocity, and DSperse checks both boxes.

Looking ahead, DSperse positions decentralized networks to ingest frontier models without Big Tech gatekeepers. Imagine safety-verified inferences powering autonomous agents, traded on tokenized compute markets. As adoption scales, expect inference tokens to decouple from hype, trading on proof throughput and slice efficiency. For traders, disciplined exits hover at resistance bands post-milestone; I've locked 2x gains riding these waves.

DSperse proves that in targeted ZK verification for AI, less proof can mean more trust. Inference Labs has built the scaffolding for DeAI to mature, turning cryptographic rigor into market dominance. Stake your compute, verify your edge, and swing with the verifiable future.
