Scalable AI Inference Pipelines: Reducing Noise in Blockchain Data

In the labyrinth of blockchain data, where transactions cascade in relentless volume, noise drowns out signal. Scalable AI inference pipelines emerge as the precision scalpel, carving clarity from chaos. These decentralized architectures, built on verifiable computation, turn noisy ledger data into actionable, verifiable insights and supply inference markets with outputs whose integrity can be checked rather than assumed.

Proof of Inference: Anchoring Trust in Decentralized AI

Inference Labs stands at the vanguard, pioneering Proof of Inference to cryptographically secure every AI output. Rather than relying on opaque assurances, mathematical proofs make outputs provable, replayable, and auditable. This shift from blind trust to empirical verification matters most where AI inference meets blockchain data: in prediction markets and tokenized compute, where stakes run high.
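Proof of Inference itself relies on zero-knowledge proofs, which are well beyond a short example. The weaker property named above, outputs that are replayable and auditable, can be sketched with a hash commitment plus re-execution. This is a minimal sketch under stated assumptions, not Inference Labs' scheme: the record layout and the names `commit`, `run_and_record`, and `audit` are hypothetical, the model is assumed to be deterministic, and unlike a ZK proof this check requires the auditor to see the inputs and rerun the model.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

# Assumption: a deterministic model mapping a list of floats to one float.
Model = Callable[[List[float]], float]


@dataclass(frozen=True)
class InferenceRecord:
    model_id: str
    inputs: Tuple[float, ...]
    output: float
    commitment: str  # SHA-256 hex digest over (model_id, inputs, output)


def commit(model_id: str, inputs: Sequence[float], output: float) -> str:
    """Hash commitment over canonical JSON. NOTE: this is NOT a zero-knowledge proof."""
    payload = json.dumps(
        {"model": model_id, "inputs": list(inputs), "output": output},
        sort_keys=True,  # canonical key order so identical records hash identically
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def run_and_record(model_id: str, model: Model, inputs: Sequence[float]) -> InferenceRecord:
    """Run the model once and publish the output together with its commitment."""
    output = model(list(inputs))
    return InferenceRecord(model_id, tuple(inputs), output, commit(model_id, inputs, output))


def audit(record: InferenceRecord, model: Model) -> bool:
    """Check the record was not altered, then replay the model to confirm the output."""
    if commit(record.model_id, record.inputs, record.output) != record.commitment:
        return False  # fields were changed after the commitment was published
    return model(list(record.inputs)) == record.output  # replay must reproduce the output exactly
```

For a toy linear scorer with weights `[0.5, -1.0, 2.0]` and inputs `[1.0, 2.0, 3.0]`, the recorded output is 4.5 and `audit` returns `True`; editing the output or auditing against a different model returns `False`. A ZK-based system replaces the replay step with proof verification, so the auditor neither reruns the model nor learns the inputs.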

Their collaboration with VentureVerse injects verifiable AI into real-world prediction markets, while a partnership with Cysic brings zero-knowledge proving to decentralized compute networks. EigenLayer’s security bolsters their Zero-Knowledge Verified Inference Network, creating a fortress for on-chain intelligence. As a portfolio manager attuned to inference staking yields, I see this as a multiplier for alpha: verifiable outputs minimize disputes, stabilize yields, and attract institutional capital wary of black-box risks.

Yet Inference Labs transcends mere “AI on crypto.” Their framework examines pipelines end to end, analyzing the underlying cryptographic primitives to achieve scalability without sacrificing privacy. In my view, this positions them as the linchpin for decentralized data pipelines, where noise reduction hinges on tamper-proof execution.

Scalable Frameworks Reshaping Blockchain Analytics

Recent breakthroughs underscore the momentum. The AutoDFL framework leverages zk-Rollups for reputation-aware federated learning, reaching over 3000 transactions per second while cutting gas costs. On top of this Layer-2 alchemy, an automated reputation model incentivizes participants, showing that scalable AI can thrive in adversarial environments.
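Why batching on a rollup cuts gas can be shown with simple arithmetic: the rollup pays a fixed Layer-1 cost per batch (for example, posting and verifying a validity proof) plus a per-transaction data cost, so the fixed part is split across every transaction in the batch. This is a rough sketch assuming a flat cost model; the function names are hypothetical and the gas figures in the usage note are made-up placeholders, not AutoDFL's measurements.

```python
from typing import Optional


def per_tx_gas(batch_overhead_gas: int, per_tx_data_gas: int, batch_size: int) -> float:
    """Amortized Layer-1 gas per transaction for one rollup batch (flat cost model)."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    # The fixed batch cost is shared by every transaction in the batch.
    return batch_overhead_gas / batch_size + per_tx_data_gas


def break_even_batch(batch_overhead_gas: int, per_tx_data_gas: int, l1_tx_gas: int) -> Optional[int]:
    """Smallest batch size whose amortized cost is strictly below a direct L1 transaction."""
    margin = l1_tx_gas - per_tx_data_gas
    if margin <= 0:
        return None  # data cost alone matches or exceeds L1, so batching never wins
    # Need overhead / n < margin, i.e. n > overhead / margin.
    return batch_overhead_gas // margin + 1
```

With a hypothetical 500,000 gas of batch overhead, 300 gas of per-transaction data, and 50,000 gas for the same update sent directly on Layer 1, `per_tx_gas(500_000, 300, 1000)` gives 800.0 gas per transaction, and `break_even_batch(500_000, 300, 50_000)` returns 11, the smallest batch for which the rollup is cheaper.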

Key Scalable AI Inference Advancements

-

AutoDFL Framework: Reputation-aware decentralized federated learning leveraging zk-Rollups for Layer-2 scaling, achieving over 3000 TPS with gas reductions. [arXiv]

-

Model Agnostic Hybrid Sharding: Privacy-preserving sharding for decentralized AI inference, distributing tasks across nodes for large models on consumer hardware. [arXiv]

-

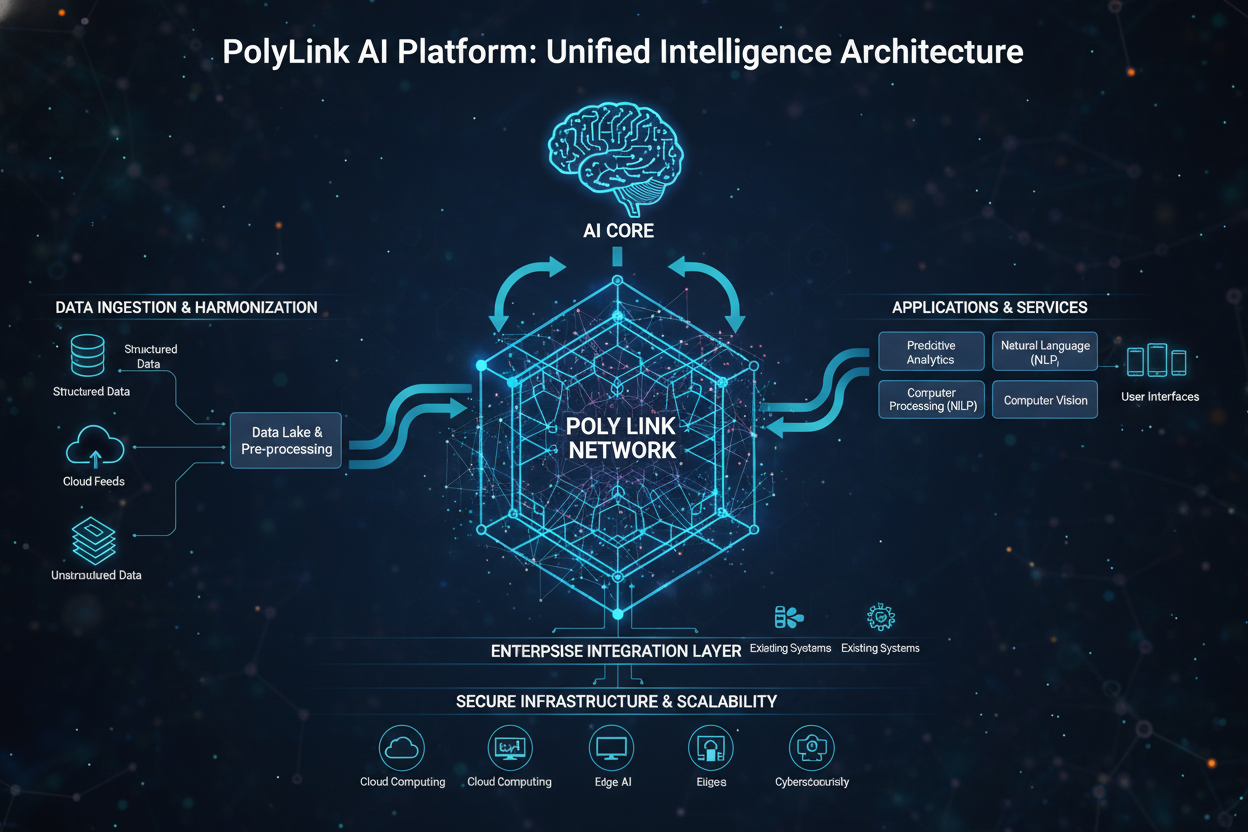

PolyLink Platform: Crowdsourced LLM inference on heterogeneous devices, secured by TIQE protocol and token incentives. [arXiv]

-

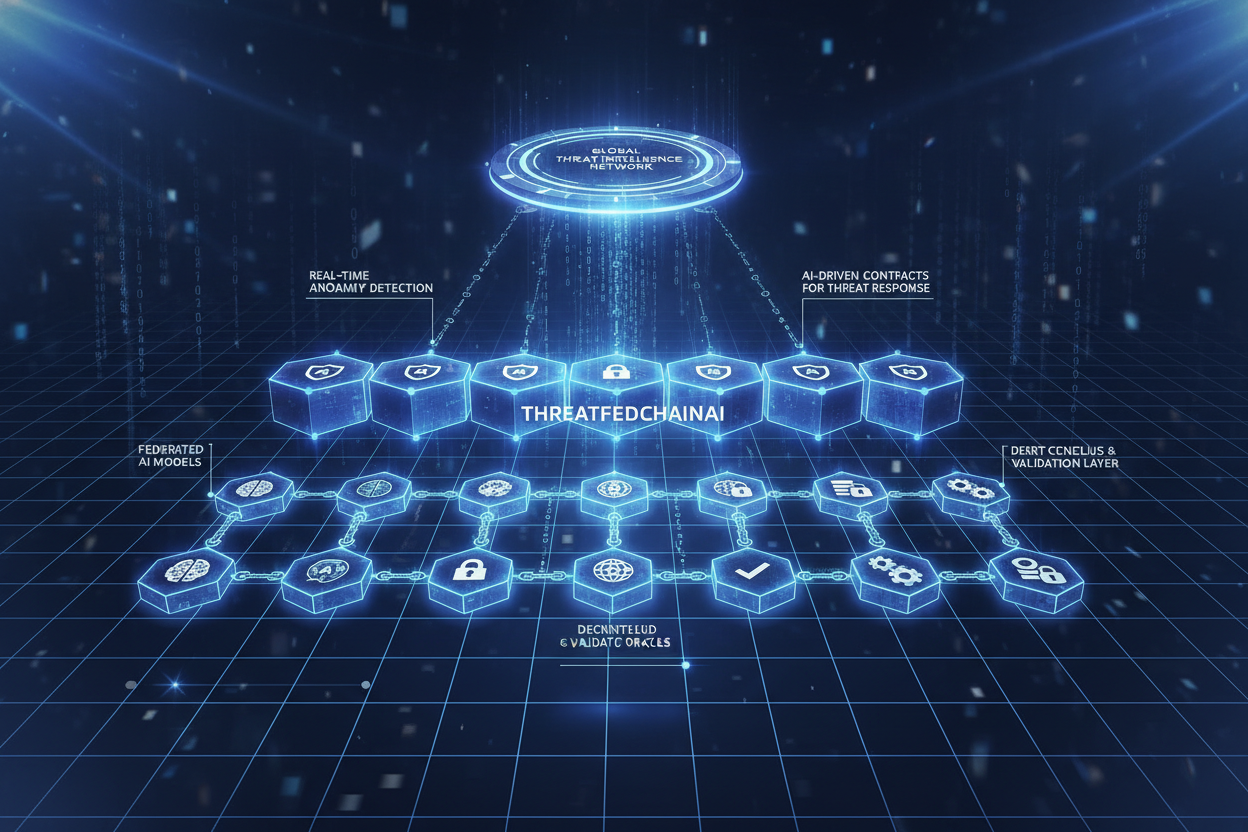

ThreatFedChainAI Architecture: Federated learning and blockchain for real-time IoT threat detection with quantum optimization and explainable AI. [Nature]

-

AI-Based Anomaly Detection Framework: Optimized CNNs using NAS, transfer learning, and compression for blockchain anomaly analysis. [MDPI]

Model Agnostic Hybrid Sharding tackles data privacy head-on, sharding deep neural networks sequentially across consumer hardware. No longer confined to data centers, inference is distributed across nodes, bringing large-scale models to edge devices. PolyLink’s decentralized platform crowdsources LLM development, enforcing integrity through TIQE while token incentives align heterogeneous nodes.
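One way to picture sequential sharding: split a model's layers into contiguous ranges sized to each node's memory, then pass activations from node to node. The sketch below is a generic greedy pipeline partition under assumed per-layer costs and node capacities; it does not reproduce the Model Agnostic Hybrid Sharding paper's algorithm or its privacy mechanisms, and `partition_layers` and `run_sharded` are hypothetical names. Layers are arbitrary callables, which keeps the split independent of model architecture.

```python
from typing import Callable, List, Sequence

# A layer maps one activation vector to the next; any callable works.
Layer = Callable[[List[float]], List[float]]


def partition_layers(layer_costs: Sequence[int], node_capacities: Sequence[int]) -> List[range]:
    """Greedily assign contiguous layer ranges to nodes, in order, within each capacity."""
    shards: List[range] = []
    start = 0
    for capacity in node_capacities:
        end, used = start, 0
        # Keep adding the next layer while it still fits on this node.
        while end < len(layer_costs) and used + layer_costs[end] <= capacity:
            used += layer_costs[end]
            end += 1
        shards.append(range(start, end))
        start = end
    if start != len(layer_costs):
        raise ValueError("not enough node capacity for all layers")
    return shards


def run_sharded(layers: Sequence[Layer], shards: Sequence[range], x: List[float]) -> List[float]:
    """Simulate sequential sharded inference; each shard would run on its own node."""
    for shard in shards:
        for i in shard:
            x = layers[i](x)
        # In a real deployment, x would be sent over the network to the next node here.
    return x
```

With layer costs `[4, 4, 2, 6]` and two nodes of capacity 8, the split is `range(0, 2)` and `range(2, 4)`. Because the split only changes where each layer runs, the sharded forward pass returns the same result as running all layers on one machine.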

These innovations form the bedrock of scalable on-chain intelligence. ThreatFedChainAI integrates quantum-inspired optimization for IoT threat detection, blending federated learning with blockchain for privacy-preserving decisions. Meanwhile, AI-based anomaly detection frameworks optimize CNNs via neural architecture search, targeting high accuracy in transaction analysis under tight resource constraints.

Noise Reduction Mechanics in Verifiable Pipelines

Blockchain data brims with artifacts: spam, wash trading, oracle drift. Scalable inference pipelines excise this noise through layered verification. Consider how Proof of Inference at Inference Labs protects data integrity: ZK proofs validate model execution without exposing inputs, so outputs that cannot be proven are rejected at the source.
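The layered idea can be sketched as a chain of cheap filters, each targeting one artifact named above. Assumptions: the `Trade` fields and the `denoise` name are hypothetical; `verify` is a stand-in for whatever proof check the pipeline uses (a ZK verifier in the Proof of Inference setting, here any boolean callable); self-trades serve as a crude wash-trading signal; and oracle drift is approximated as a price far from the median of the surviving trades. Thresholds are illustrative, not tuned.

```python
import statistics
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Trade:
    buyer: str
    seller: str
    amount: float
    price: float


def denoise(
    trades: Iterable[Trade],
    verify: Callable[[Trade], bool],
    min_amount: float,
    max_drift: float,
) -> List[Trade]:
    """Apply layered filters; each layer removes one kind of noise."""
    survivors = [
        t
        for t in trades
        if verify(t)  # layer 1: reject anything whose proof/attestation fails
        and t.buyer != t.seller  # layer 2: self-trades, a crude wash-trading signal
        and t.amount >= min_amount  # layer 3: dust-sized spam
    ]
    if not survivors:
        return []
    # Layer 4: drop prices that drift too far from the median of the remaining trades.
    reference = statistics.median([t.price for t in survivors])
    return [t for t in survivors if abs(t.price - reference) <= max_drift * reference]
```

The median is taken only after the first three layers run, so rejected or spam trades cannot pull the reference price used for the drift check.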

AutoDFL’s reputation model dynamically weights contributors, sidelining noisy inputs to amplify signal fidelity. Hybrid sharding partitions computations, mitigating single-point failures and variance in node quality. PolyLink’s tokenomics reward high-fidelity inference, creating self-regulating markets that evolve toward precision.
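Reputation weighting of this kind can be sketched in a few lines: aggregate contributions with weights proportional to reputation, then move each contributor's reputation toward a score based on how close it landed to the aggregate. The exponential-moving-average update, the distance-based score, the `alpha` and `scale` parameters, and the function names are assumptions for illustration; AutoDFL's actual reputation model is not reproduced here.

```python
import math
from typing import Dict, List


def weighted_aggregate(updates: Dict[str, List[float]], reputation: Dict[str, float]) -> List[float]:
    """Reputation-weighted mean of contributors' update vectors."""
    total = sum(reputation[c] for c in updates)
    dim = len(next(iter(updates.values())))
    aggregate = [0.0] * dim
    for contributor, vector in updates.items():
        weight = reputation[contributor] / total
        for i, value in enumerate(vector):
            aggregate[i] += weight * value
    return aggregate


def update_reputation(
    updates: Dict[str, List[float]],
    reputation: Dict[str, float],
    aggregate: List[float],
    alpha: float = 0.5,
    scale: float = 1.0,
) -> Dict[str, float]:
    """EMA update toward a score that decays with Euclidean distance from the aggregate."""
    new_reputation = {}
    for contributor, vector in updates.items():
        distance = math.dist(vector, aggregate)
        score = 1.0 / (1.0 + distance / scale)  # 1.0 when identical, toward 0 as distance grows
        new_reputation[contributor] = (1 - alpha) * reputation[contributor] + alpha * score
    return new_reputation
```

Starting from equal reputations, a contributor that submits `[10.0, -10.0]` while two others submit `[1.0, 1.0]` ends the first round with lower reputation than the consistent pair, so its share of the next aggregate shrinks and the aggregate moves toward their consensus.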

In practice, these pipelines yield compounded benefits. Anomaly detection frameworks, compressed to fit blockchain resource constraints, use transfer learning to flag irregularities while keeping compute costs low. ThreatFedChainAI’s explainable analytics demystify decisions, fostering trust in high-stakes IoT deployments. From my vantage point, optimizing for medium-term trends, integrating such pipelines into inference staking portfolios hedges against data volatility and can unlock superior risk-adjusted returns.
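The anomaly detection frameworks above rely on optimized, compressed CNNs, which are too large for a short example. As a much cheaper stand-in, a robust statistical pre-filter can flag outliers in a metric such as per-block fees or volumes before anything heavier runs. This sketch swaps in the modified z-score of Iglewicz and Hoaglin (median and median absolute deviation, conventional 3.5 cutoff); it is not the CNN approach, and `robust_flags` is a hypothetical name.

```python
import statistics
from typing import List, Sequence


def robust_flags(values: Sequence[float], threshold: float = 3.5) -> List[bool]:
    """Flag outliers via the modified z-score: 0.6745 * (x - median) / MAD."""
    median = statistics.median(values)
    mad = statistics.median([abs(v - median) for v in values])  # median absolute deviation
    if mad == 0:
        # Most values equal the median; flag anything that differs from it.
        return [v != median for v in values]
    return [abs(0.6745 * (v - median) / mad) > threshold for v in values]
```

For `[10, 11, 9, 10, 12, 10, 95]`, only the last value is flagged. Median and MAD are used instead of mean and standard deviation because a single extreme value barely moves them, so the outlier cannot mask itself.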