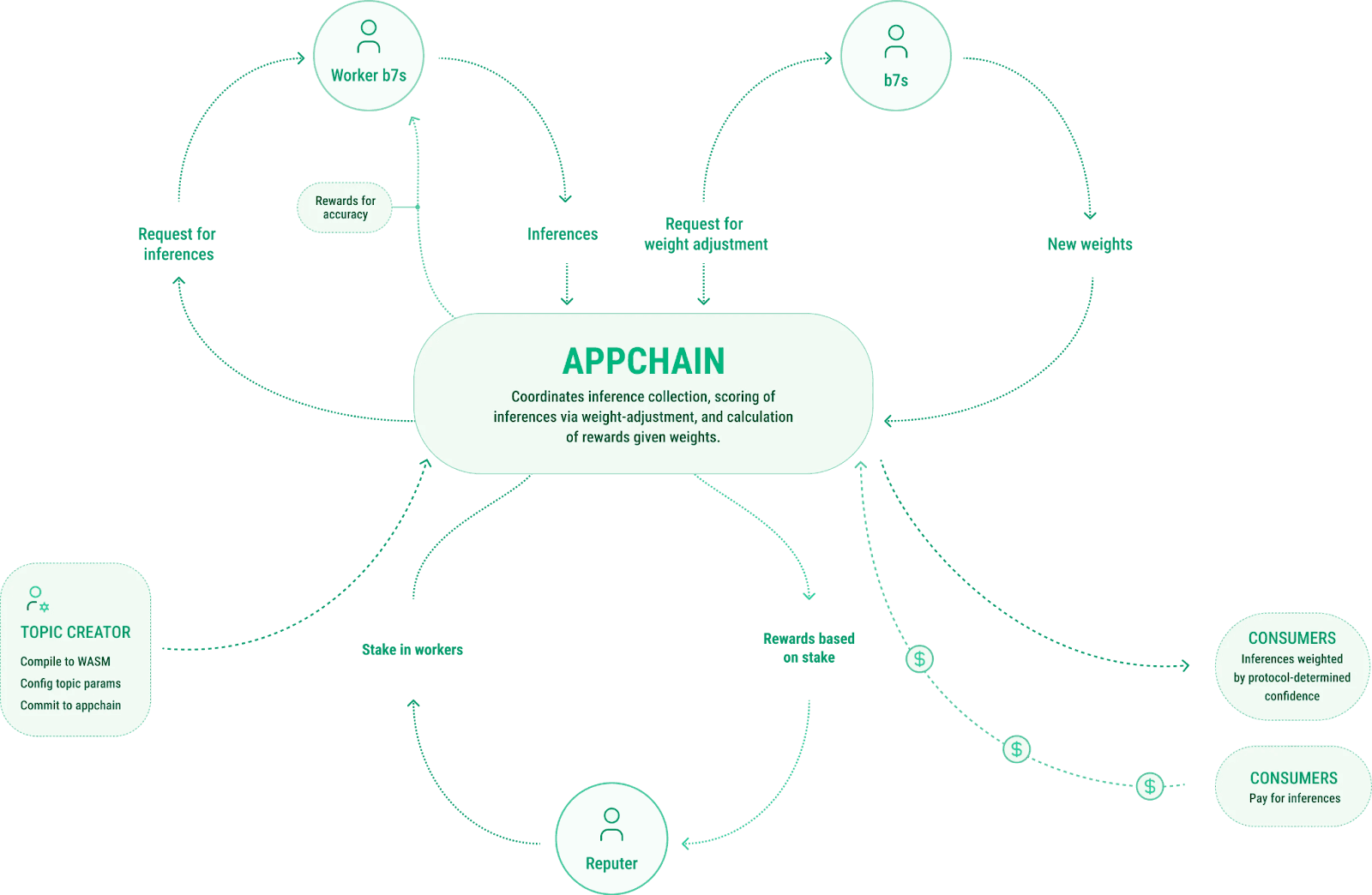

Distributed Validators Securing AI Inference Outputs in Trustless Markets

In the evolving landscape of decentralized inference markets, the integrity of AI outputs stands as a paramount concern. As protocols scale to handle complex workloads like provably fair trading and fraud detection on DeFi platforms, traditional centralized verification falters under trust assumptions. Enter distributed validators: a sophisticated cadre of nodes that collectively attest to AI inference results, forging trustless inference security through cryptographic consensus and staking incentives. This paradigm shift, propelled by innovators like Inference Labs, redefines how global networks secure AI without single points of failure.

These decentralized AI validators operate by executing models in parallel, generating zero-knowledge proofs, and aggregating attestations on-chain. Miners or validators submit predictions alongside verifiable computations, ensuring outputs resist tampering. Inference Labs exemplifies this with their Verified Inference Network, leveraging partnerships to embed zkML into prediction markets and beyond.
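The aggregation step can be made concrete with a minimal sketch. It assumes inference is deterministic, so that honest validators produce byte-identical outputs, and that an output is accepted once validators holding at least two-thirds of total stake attest to the same digest. The function names, the quorum value, and the use of SHA-256 are illustrative assumptions, not details of Inference Labs' network.

```python
import hashlib
from collections import defaultdict
from fractions import Fraction


def output_digest(output: bytes) -> str:
    """Commit to an inference output with a SHA-256 digest."""
    return hashlib.sha256(output).hexdigest()


def aggregate_attestations(attestations, stakes, quorum=Fraction(2, 3)):
    """Return the digest backed by at least `quorum` of total stake, else None.

    attestations: dict mapping validator id -> attested digest
    stakes:       dict mapping validator id -> bonded stake (int)
    The 2/3 quorum is a hypothetical parameter chosen for illustration.
    """
    total = sum(stakes.values())
    if total == 0 or not attestations:
        return None
    weight = defaultdict(int)
    for validator, digest in attestations.items():
        # Validators without a bond contribute no weight.
        weight[digest] += stakes.get(validator, 0)
    digest, backing = max(weight.items(), key=lambda kv: kv[1])
    return digest if Fraction(backing, total) >= quorum else None


stakes = {"a": 40, "b": 35, "c": 25}
good = output_digest(b"label=1")
bad = output_digest(b"label=0")
accepted = aggregate_attestations({"a": good, "b": good, "c": bad}, stakes)
rejected = aggregate_attestations({"a": good, "b": bad, "c": bad}, stakes)
```

In the first call, validators holding 75 of 100 staked units agree, so the digest is accepted. In the second, no digest reaches the quorum and the function returns `None`. A production system would verify a proof per attestation rather than compare digests alone.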

Forging Trust Through Verifiable Compute Partnerships

Strategic alliances underscore the momentum. Inference Labs’ collaboration with Cysic delivers scalable decentralized compute for ZK and AI workloads, merging ASIC-powered hardware with a verifiable framework to slash costs and boost performance. This tandem addresses perennial bottlenecks in decentralized AI, where verifiability often compromises speed.

Inference Labs partners with VXVHub to integrate verifiable AI in prediction markets, using zkML for tamper-proof, reliable AI outputs.

Similarly, integrations with EigenLayer amplify security by restaking on AVSs (Actively Validated Services), creating a robust backbone for on-chain AI. Looking ahead to 2026 trends, GenLayer’s partnerships with LibertAI and Aleph Cloud pioneer confidential inference validated at the edge, while Gaia extends this to on-chain apps. These moves position inference validation staking as a yield-generating mechanism, attracting stakers to underwrite AI reliability.

Mechanisms Powering AI Proof Validators

At the core, AI proof validators employ swarm intelligence and lightweight protocols. Platforms like Mira Network deploy decentralized output verification, cross-checking inferences across nodes to flag discrepancies. Cortensor’s Proof of Inference mechanism quantifies model fidelity, rewarding honest computation and penalizing malicious actors through slashing conditions.
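Cross-checking across nodes can be illustrated with a small sketch. It assumes each node reports a single numeric score for the same input and that the median serves as the reference value. The function name and the tolerance are hypothetical and do not describe Mira Network's or Cortensor's actual protocols.

```python
from statistics import median


def flag_discrepancies(outputs, tolerance=0.05):
    """Compare each node's score to the median and flag outliers.

    outputs:   dict mapping node id -> numeric inference score
    tolerance: hypothetical maximum allowed distance from the median
    Returns (reference_score, sorted list of flagged node ids).
    """
    if not outputs:
        raise ValueError("at least one node output is required")
    reference = median(outputs.values())
    flagged = sorted(
        node for node, score in outputs.items()
        if abs(score - reference) > tolerance
    )
    return reference, flagged


reference, flagged = flag_discrepancies(
    {"n1": 0.91, "n2": 0.90, "n3": 0.92, "n4": 0.40}
)
```

The median is used rather than the mean because a single dishonest node cannot drag it far. Here `n4` is flagged while the three nodes clustered near 0.9 pass.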

Key Benefits of Distributed Validators

- Enhanced Security: Distributed validators eliminate single points of failure, as seen in GenLayer’s partnerships with LibertAI and Aleph Cloud for confidential, blockchain-secured AI inference.
- Staking Yields: Participants earn rewards by securing AI outputs, mirroring EigenLayer’s AVS model integrated with Inference Labs’ verifiable inference network.
- Scalability for AI Workloads: Enables massive parallel processing, exemplified by Cysic and Inference Labs’ ASIC-powered compute for ZK and AI tasks.
- Resistance to Sybil Attacks: Broad node distribution, together with Mira Network’s cross-node output verification and Cortensor’s Proof of Inference, thwarts malicious replication.
- Oracle-Independent Trust: Verifiable execution via zkML and swarm intelligence in Gaia and Fortytwo protocols bypasses traditional oracles.

Fortytwo and VeriLLM push boundaries further, with swarm-based validation achieving public verifiability at superior speeds. Unlike brittle oracles, these systems distribute risk, ensuring no validator dominates consensus. Hyperbolic’s novel solutions tackle efficiency head-on, proving that trust, speed, and verifiability coexist in decentralized setups.

Staking Incentives in Trustless Inference Security

Inference validation staking emerges as the economic glue. Validators bond tokens to participate, earning yields from inference fees while facing penalties for invalid proofs. This aligns incentives, mirroring Ethereum’s proof-of-stake but tailored for compute-intensive AI tasks. Inference Labs’ model, building beyond oracles, quietly crafts a new DeFi trust layer.
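The bond, reward, and penalty loop can be sketched as a toy ledger. It assumes fees are split pro rata by bond among validators whose proofs verify, and that an invalid proof burns a fixed fraction of the offender's bond. The class name, the 10% slash fraction, and the settlement rule are illustrative assumptions rather than any protocol's published parameters.

```python
from dataclasses import dataclass, field


@dataclass
class StakingLedger:
    slash_fraction: float = 0.10  # hypothetical penalty per invalid proof
    bonds: dict = field(default_factory=dict)
    rewards: dict = field(default_factory=dict)

    def bond(self, validator: str, amount: float) -> None:
        """Lock tokens so the validator may take part in validation."""
        if amount <= 0:
            raise ValueError("bond amount must be positive")
        self.bonds[validator] = self.bonds.get(validator, 0.0) + amount
        self.rewards.setdefault(validator, 0.0)

    def settle(self, fee: float, proof_valid: dict) -> None:
        """Share `fee` among validators with valid proofs; slash the rest.

        proof_valid: dict mapping validator id -> True if its proof verified
        """
        honest = [v for v, ok in proof_valid.items()
                  if ok and self.bonds.get(v, 0.0) > 0]
        honest_stake = sum(self.bonds[v] for v in honest)
        for v in honest:
            # Pro-rata share of the inference fee, weighted by bond size.
            self.rewards[v] += fee * self.bonds[v] / honest_stake
        for v, ok in proof_valid.items():
            if not ok and v in self.bonds:
                self.bonds[v] -= self.bonds[v] * self.slash_fraction


ledger = StakingLedger()
ledger.bond("a", 100)
ledger.bond("b", 300)
ledger.bond("c", 200)
ledger.settle(fee=8.0, proof_valid={"a": True, "b": True, "c": False})
```

After this round, `a` and `b` split the fee 1:3 in line with their bonds, while `c` loses a tenth of its bond and earns nothing.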

Users benefit from tamper-proof outputs in high-stakes environments, from autonomous trading to self-managing defense systems. As networks like Gaia democratize model execution on personal hardware, distributed validators ensure collective honesty prevails. The result? A resilient ecosystem where AI inference thrives sans black boxes.

Yet this economic alignment demands more than mere token bonds; it requires precision-engineered slashing mechanisms that discern malice from honest errors in high-dimensional AI spaces. Inference Labs’ approach, drawing from their Zero-Knowledge Verified Inference Network, sets a benchmark by anchoring proofs to EigenLayer’s shared security, minimizing capital fragmentation while maximizing uptime.
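One way to separate honest error from malice is a graded penalty, sketched below. The idea is that small numeric deviations, such as floating-point differences across heterogeneous hardware, go unpunished, while large deviations draw the full slash. The thresholds, the linear ramp between them, and the function name are all assumptions made for illustration. The source does not specify how Inference Labs or any other protocol calibrates slashing.

```python
def graded_slash_fraction(deviation, soft_tol=0.01, hard_tol=0.10, max_slash=0.5):
    """Map a validator's deviation from consensus to a fraction of bond to slash.

    deviation: non-negative distance between the validator's output and consensus
    soft_tol:  hypothetical band treated as honest numerical noise (no penalty)
    hard_tol:  hypothetical bound beyond which the full penalty applies
    max_slash: hypothetical maximum fraction of the bond that can be slashed
    """
    if deviation < 0:
        raise ValueError("deviation must be non-negative")
    if deviation <= soft_tol:
        return 0.0
    if deviation >= hard_tol:
        return max_slash
    # Linear ramp between the two tolerances.
    return max_slash * (deviation - soft_tol) / (hard_tol - soft_tol)


noise_penalty = graded_slash_fraction(0.005)
midway_penalty = graded_slash_fraction(0.055)
blatant_penalty = graded_slash_fraction(0.2)
```

A deviation inside the soft band costs nothing, one halfway up the ramp costs half the maximum, and anything past the hard bound costs the maximum. Repeat offences could be layered on top by tracking history per validator.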

Navigating Verifiability Challenges with Innovation

Decentralized AI validators confront formidable hurdles: computational overhead from zkML proofs, latency in swarm consensus, and sybil resistance amid heterogeneous hardware. Hyperbolic’s practical solutions cut through this fog, optimizing inference pipelines to balance trustless inference security with real-world throughput. Their emphasis on efficiency resonates deeply; after all, a verifiable system that’s too slow undermines its own purpose in fast-paced DeFi arenas.

Fortytwo’s swarm intelligence flips the script, distributing validation across lightweight nodes to achieve public verifiability without centralized chokepoints. VeriLLM complements this with streamlined frameworks that verify large language models at the edge, slashing proof generation times by orders of magnitude. These AI proof validators don’t just check boxes; they redefine feasibility, proving that decentralized oracle alternatives can outpace legacy systems in both speed and reliability.

Mira Network’s output verification layers in redundancy, where nodes cross-audit inferences and stake on consensus, creating a self-policing web. Cortensor elevates this via Proof of Inference, a quantifiable metric that ties rewards directly to computational honesty. Slashing isn’t punitive; it’s evolutionary, weeding out underperformers to elevate the network’s collective intelligence.

Edge Applications and Scalable Deployments

Picture autonomous trading bots on DeFi platforms, their decisions ratified by distributed validators in milliseconds. Inference Labs envisions this reality, extending to fraud detection and compliance where a single faulty output could cascade into millions in losses. Self-managing defense systems, too, gain from this rigor, with AI outputs secured against adversarial inputs through provable execution.

Gaia’s model, allowing everyday hardware to host inferences, democratizes access while distributed validators enforce trust. Fast-forward to 2026: GenLayer’s tie-ups with LibertAI and Aleph Cloud fuse confidential compute with blockchain validation, ideal for privacy-sensitive apps. GenLayer’s Gaia integration brings scalable, verifiable AI to on-chain worlds, from NFT generation to dynamic governance.

Comparison of Key Protocols for Distributed Validators in AI Inference

| Protocol | Core Technology | Security Mechanism | Key Partnerships | Main Applications |
|---|---|---|---|---|
| Inference Labs | zkML Proofs | EigenLayer Security | Cysic, VXVHub | Provably fair trading, fraud detection, prediction markets |
| Mira Network | Output Verification | Sybil Resistance | N/A | Decentralized AI output verification |
| Cortensor | Proof of Inference | Staking Yields | N/A | Trustworthy AI inference with staking rewards |
| GenLayer | Confidential Inference | Edge Validation | LibertAI, Aleph Cloud, Gaia | Scalable on-chain AI execution |

These aren’t isolated experiments; they’re interlocking pieces in a maturing infrastructure. Platforms tokenize compute, letting stakers earn from inference demand while protocols sidestep oracle pitfalls.

Portfolio Alpha in Inference Staking Yields

From a multi-asset vantage, inference validation staking gleams with asymmetric upside. Yields here compound from dual streams: inference fees and AVS restaking premiums. Unlike volatile equities, these offer medium-term convexity, buffered by slashing insurance and overcollateralization. I’ve allocated selectively to such primitives, capturing alpha from networks where security bootstraps adoption.

Risk abounds, granted: node centralization looms and proof finality lags. But mitigations proliferate. Cysic’s ASIC integration with Inference Labs tackles hardware disparities, while VXVHub’s prediction market pilots stress-test resilience. Stakers who grasp these dynamics position for the inference boom, where tokenized compute rivals cloud giants on cost and sovereignty.

The alchemy of distributed validators transmutes raw AI power into bankable trust. As markets mature, protocols wielding these tools will dominate, rewarding early visionaries with enduring yields. In trustless realms, verification isn’t optional; it’s the ultimate moat.