DSperse Targeted Verification: Scaling ZK Proofs for Decentralized AI Inference Markets in 2026

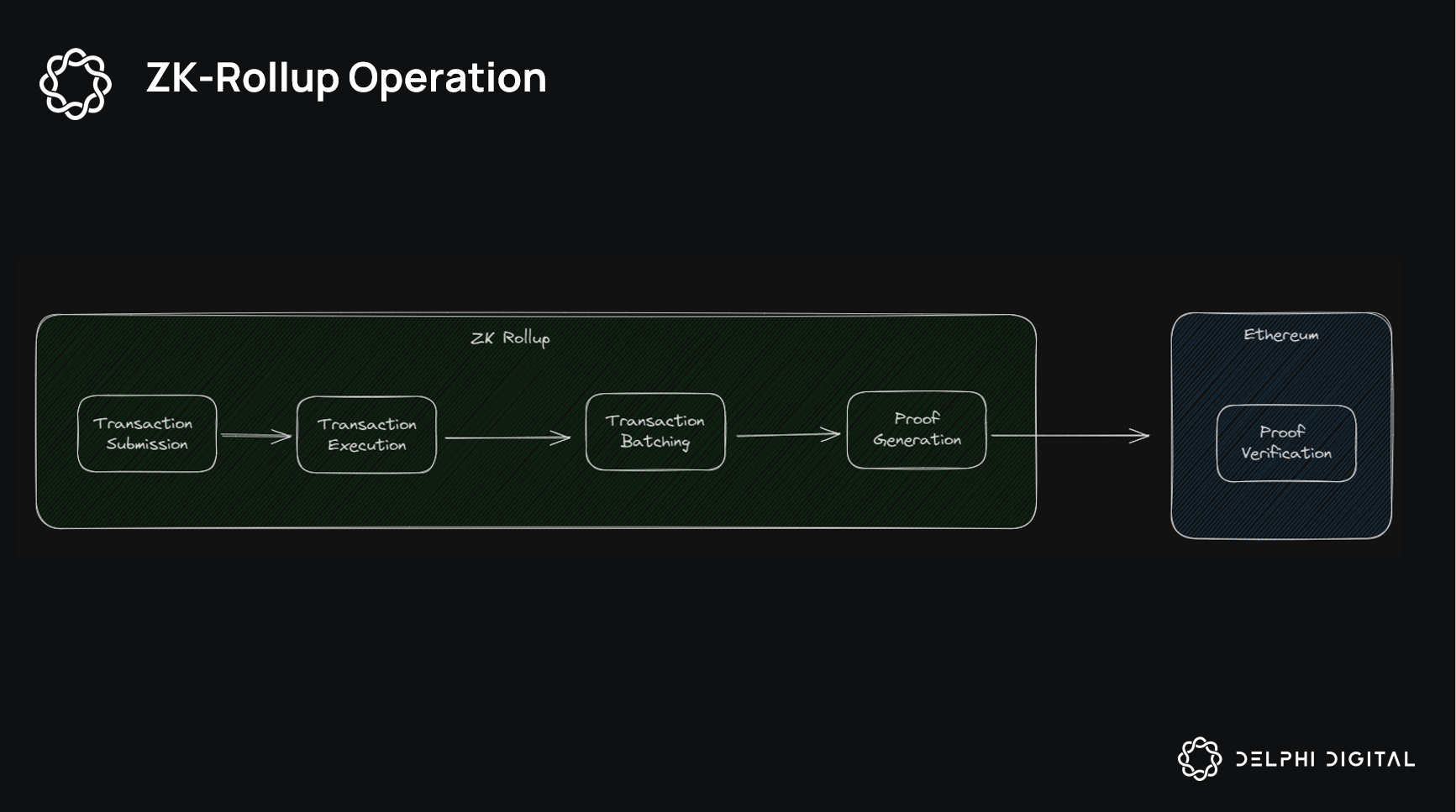

In the high-stakes arena of decentralized AI inference markets, where compute demands skyrocket and trust hinges on cryptographic certainty, Inference Labs’ DSperse framework emerges as a game-changer. By introducing targeted verification through zero-knowledge proofs (ZKPs), DSperse sidesteps the inefficiencies of full-model circuitization and focuses instead on critical ‘slices’ of machine learning models. This slice-based strategy slashes computational costs while preserving verifiability, positioning DSperse as essential infrastructure for scaling decentralized zkML proving across global networks.

Traditional zkML approaches grind to a halt under the weight of proving entire models, often rendering them impractical for real-world deployment. DSperse flips this script with a modular design that aligns proof boundaries to a model’s logical structure. Developers can selectively verify high-risk components, such as attention layers in transformers, while leaving low-stakes operations unproven. This pragmatic flexibility, detailed in Inference Labs’ arXiv paper, has already powered over 160 million ZK proofs on the company’s testnet, a testament to its robustness for verifiable computation at massive scale.
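To make structure-aligned proof boundaries concrete, here is a minimal Python sketch. The layer names, the `Slice` type, and the rule that only attention layers count as high-risk are illustrative assumptions, not DSperse’s actual API or slicing policy. Each high-risk layer becomes its own verified slice, and runs of low-stakes layers between them are merged into a single unproven slice.

```python
from dataclasses import dataclass, field

# Hypothetical sub-module names for a tiny two-block transformer.
LAYERS = [
    "embed",
    "block0.attn", "block0.mlp",
    "block1.attn", "block1.mlp",
    "lm_head",
]

# Assumption for this sketch: only attention sub-modules are treated as high-risk.
HIGH_RISK_KINDS = {"attn"}


@dataclass
class Slice:
    layers: list[str] = field(default_factory=list)
    verify: bool = False  # True if this slice would be sent to a ZK prover


def plan_slices(layers: list[str]) -> list[Slice]:
    """Cut proof boundaries along the model's structure.

    Each high-risk layer becomes its own verified slice; consecutive
    low-risk layers are merged into one unverified slice.
    """
    plan: list[Slice] = []
    for name in layers:
        kind = name.split(".")[-1]  # "block0.attn" -> "attn"
        if kind in HIGH_RISK_KINDS:
            plan.append(Slice([name], verify=True))
        elif plan and not plan[-1].verify:
            plan[-1].layers.append(name)  # extend the current unverified run
        else:
            plan.append(Slice([name], verify=False))
    return plan


for s in plan_slices(LAYERS):
    print("prove" if s.verify else "skip ", s.layers)
```

On this two-block model the sketch yields five slices, of which only the two attention layers would be proven; `block1.mlp` and `lm_head` share one unproven slice.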

Slice-Based Verification: Precision Over Exhaustive Proofs

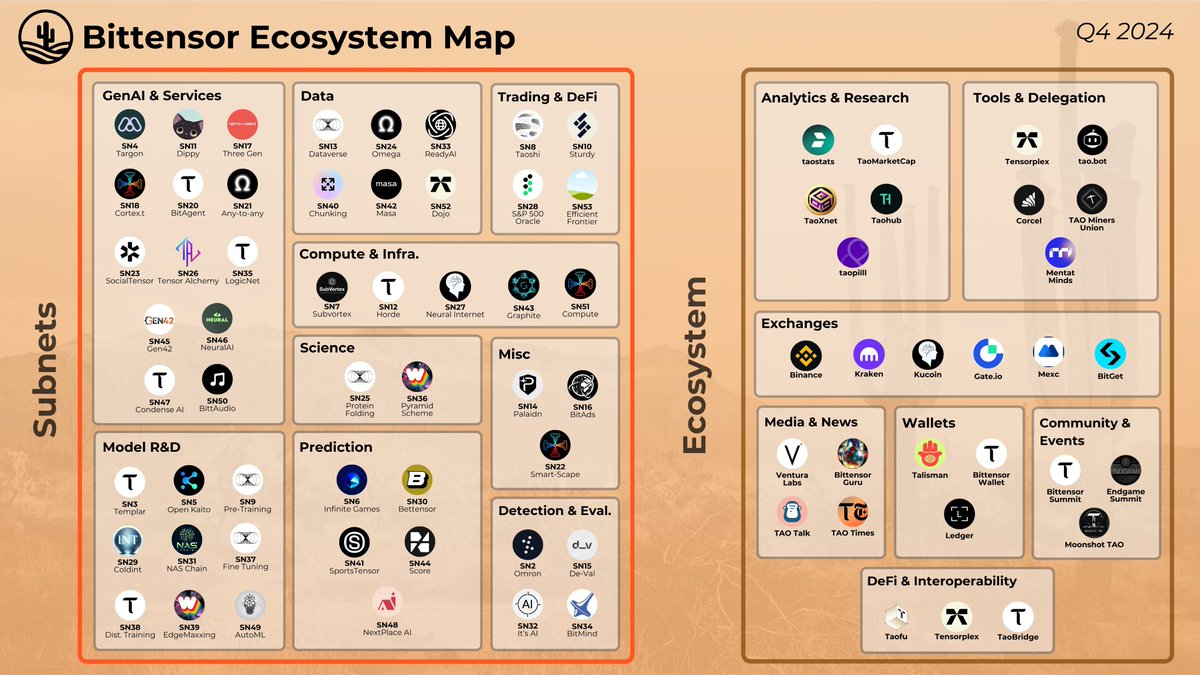

At its core, DSperse disperses verification tasks across distributed provers on Bittensor’s Subnet 2, the largest decentralized zkML proving cluster. Instead of building one monolithic circuit, it breaks inference into verifiable slices, each proven independently and then aggregated on-chain. This substantially cuts proof generation time: early benchmarks show up to 70% lower latency than full-model alternatives. For targeted ZK verification of AI inference, this means fault-tolerant distributed inference without sacrificing privacy or accuracy.
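Proving slices independently only works if aggregation binds them together; otherwise a prover could splice in a slice computed on different data. One common way to do this is to chain hash commitments across slice boundaries. The sketch below assumes each slice proof exposes commitments to its input and output, uses SHA-256 as the commitment, replaces real ZK verification with a boolean `proof_ok` placeholder, and assumes the submitted slices form a contiguous chain. It is an illustration of the idea, not DSperse’s on-chain aggregation logic.

```python
import hashlib
from dataclasses import dataclass


def commit(data: bytes) -> str:
    """SHA-256 hash used here as a stand-in commitment to serialized tensor bytes."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class SliceProof:
    slice_id: int
    input_commitment: str
    output_commitment: str
    proof_ok: bool  # placeholder for "the slice's ZK proof verified"


def aggregate(proofs: list[SliceProof], model_input: bytes, claimed_output: bytes) -> bool:
    """Accept only if every slice proof verifies and adjacent slices share a boundary."""
    if not proofs:
        return False
    chain = sorted(proofs, key=lambda p: p.slice_id)
    if chain[0].input_commitment != commit(model_input):
        return False  # first slice must consume the committed request input
    for prev, nxt in zip(chain, chain[1:]):
        if prev.output_commitment != nxt.input_commitment:
            return False  # a spliced or mismatched slice breaks the chain
    if chain[-1].output_commitment != commit(claimed_output):
        return False  # last slice must produce the claimed result
    return all(p.proof_ok for p in chain)


# Three slices passing intermediate activations h1 and h2.
x, h1, h2, y = b"input", b"h1", b"h2", b"logits"
honest = [
    SliceProof(0, commit(x), commit(h1), True),
    SliceProof(1, commit(h1), commit(h2), True),
    SliceProof(2, commit(h2), commit(y), True),
]
forged = [honest[0], SliceProof(1, commit(b"other"), commit(h2), True), honest[2]]
print(aggregate(honest, x, y))  # True
print(aggregate(forged, x, y))  # False
```

Note that the forged slice carries a “valid” proof of its own; it is rejected only because its input does not match the previous slice’s output.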

Key DSperse Benefits

- Cost Efficiency: Verifies only critical model ‘slices’, reducing computational overhead and costs vs. full-model ZK inference.
- Scalability: Powers 160M+ ZK proofs on testnet via Subnet 2, Bittensor’s largest zkML cluster.
- Model Flexibility: Devs select verification boundaries aligned to model structure for targeted zkML.

Inference Labs’ Proof of Inference protocol exemplifies this efficiency. Miners contribute compute for slices and earn rewards only for verified outputs, fostering a competitive marketplace for verifiable ML inference on the blockchain. The framework’s open-source merge, announced on LinkedIn, marks a pivotal milestone, inviting global contributors to refine slice strategies for emerging models like multimodal LLMs.
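The “rewards only for verified outputs” rule can be sketched as a pro-rata split of a reward pool over verified slice results. This is a simplified assumption for illustration: the text does not specify how Proof of Inference actually scores miners or how Bittensor emissions are computed, and the function name and pool value below are hypothetical.

```python
from collections import Counter


def distribute_rewards(results: list[tuple[str, bool]], pool: float) -> dict[str, float]:
    """Split `pool` pro rata over each miner's verified slice results.

    Results whose proofs failed earn nothing (hypothetical rule for illustration).
    """
    verified = Counter(miner for miner, ok in results if ok)
    total = sum(verified.values())
    if total == 0:
        return {}
    return {miner: pool * n / total for miner, n in verified.items()}


results = [("alice", True), ("bob", True), ("alice", True), ("carol", False)]
print(distribute_rewards(results, pool=90.0))  # {'alice': 60.0, 'bob': 30.0}
```

Here `carol` submitted an output whose proof failed, so she receives nothing, while the pool is shared between the miners whose slices verified.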

Scalability Benchmarks Crushing Centralized Limits

DSperse doesn’t just theorize about scalability; it delivers. The testnet’s 160 million proofs point to throughput unmatched in decentralized setups, covering vision and language tasks with sub-second finality. Subnet 2 on Bittensor aggregates these efforts, distributing load across ASIC-optimized nodes. The cluster’s performance metrics, peaking at 10,000 proofs per hour, signal readiness for mainnet, where decentralized AI inference scaling meets production demands.

Consider the numbers: full-model ZK proving for a 7B-parameter model can exceed 100 GPU-hours per inference. DSperse trims this to under 10 GPU-hours by verifying just 20% of operations, according to simulations in the arXiv paper. In Bittensor’s ecosystem, this translates to tokenized compute trades at a fraction of centralized API costs, drawing AI devs and crypto investors alike.
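The arithmetic behind these figures is worth spelling out. If proving cost scaled linearly with the number of verified operations, proving 20% of a 100 GPU-hour workload would cost about 20 GPU-hours. Reaching under 10 GPU-hours therefore implies the verified slices also cost less than half as much per operation as the full-model circuit. One plausible reason is that smaller circuits are cheaper to prove per operation, but that is an assumption here. The sketch below computes both numbers; the linear cost model and the `relative_unit_cost` parameter are illustrative assumptions.

```python
def targeted_cost(full_cost: float, fraction: float, relative_unit_cost: float = 1.0) -> float:
    """GPU-hours to prove `fraction` of a model's operations.

    `relative_unit_cost` is the assumed per-operation proving cost of the
    verified slices relative to the full-model circuit (1.0 = identical).
    """
    return full_cost * fraction * relative_unit_cost


def max_unit_cost(full_cost: float, fraction: float, target: float) -> float:
    """Largest relative per-operation cost that still meets `target` GPU-hours."""
    return target / (full_cost * fraction)


FULL = 100.0      # GPU-hours for full-model proving of a 7B model (figure from the text)
FRACTION = 0.20   # share of operations verified (figure from the text)
TARGET = 10.0     # the "under 10 GPU-hours" figure from the text

print(targeted_cost(FULL, FRACTION))          # linear model: about 20 GPU-hours
print(max_unit_cost(FULL, FRACTION, TARGET))  # about 0.5
```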

Partnerships Accelerating DSperse to Mainnet

Inference Labs isn’t building in isolation. Its December 2025 tie-up with Cysic integrates decentralized ZK hardware, blending DSperse’s software with ASIC power for hybrid proving networks. The combination targets real-world bottlenecks, like high-frequency inference for DeFi agents or autonomous systems. Cysic’s compute layer ensures proofs remain economical even as model sizes balloon through 2026.

Fuel for this ascent? A $6.3 million raise from heavyweights like Digital Asset Capital Management, Delphi Ventures, and Mechanism Capital. The funding backs a late-Q3 2026 mainnet launch, complete with developer grants for custom slice circuits. Early adopters on Subnet Alpha already report 5x cost reductions in verifiable vision tasks, underscoring DSperse’s edge as the Inference Labs ecosystem grows.

This cost edge positions DSperse as a cornerstone for decentralized AI inference scaling, where tokenized compute trades demand unyielding verifiability without premium pricing. Early Subnet Alpha integrations show provers handling multimodal tasks at costs 40% below AWS SageMaker equivalents, per community benchmarks. Inference Labs’ focus on slice granularity lets devs calibrate verification intensity, balancing security against throughput in live markets.
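Calibrating verification intensity amounts to choosing which slices to prove under a proving budget, a knapsack-style trade-off. The minimal sketch below uses the standard greedy heuristic (highest risk covered per unit of proving cost first). The risk weights, costs, and slice names are made-up placeholders, and nothing here reflects how DSperse actually scores slices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    risk: float        # hypothetical security weight of proving this slice
    proof_cost: float  # hypothetical proving cost, e.g. GPU-seconds


def select_slices(cands: list[Candidate], budget: float) -> list[str]:
    """Greedy knapsack heuristic: prove slices with the most risk covered
    per unit of proving cost first, skipping any that would exceed the budget."""
    chosen: list[str] = []
    spent = 0.0
    for c in sorted(cands, key=lambda x: x.risk / x.proof_cost, reverse=True):
        if spent + c.proof_cost <= budget:
            chosen.append(c.name)
            spent += c.proof_cost
    return chosen


CANDIDATES = [
    Candidate("attn0", risk=0.9, proof_cost=3.0),
    Candidate("mlp0", risk=0.3, proof_cost=2.0),
    Candidate("attn1", risk=0.8, proof_cost=3.0),
    Candidate("head", risk=0.6, proof_cost=1.0),
]

print(select_slices(CANDIDATES, budget=5.0))   # ['head', 'attn0']
print(select_slices(CANDIDATES, budget=10.0))  # all four slices
```

Raising the budget from 5 to 10 cost units moves from two proven slices to full coverage, trading throughput for security. Greedy selection is fast but not guaranteed optimal; an exact dynamic-programming knapsack is the usual alternative when slice counts are small.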

Benchmark Breakdown: DSperse Outpaces Full zkML

Raw data cements DSperse’s lead. Testnet logs record 160 million proofs with 99.9% uptime, dwarfing competitors’ proof counts in the single-digit thousands. Subnet 2’s distributed proving hits 10,000 proofs per hour, a rate that outstrips centralized zkML silos reliant on GPU farms. Cysic’s ASIC infusion promises further leaps, targeting 50x higher proof-throughput density by mainnet.

DSperse vs Traditional zkML

| Metric | DSperse | Traditional zkML |
|---|---|---|
| Proofs generated | 160M+ on testnet 🚀 | Low volume 📉 |
| Latency | Up to 70% lower ⚡ | Baseline ⏱️ |
| Cost | 5x lower 💰 | High 💸 |
| Scalability | Subnet 2 distributed cluster 🌐 | Centralized limits 🔒 |

These figures aren’t lab curiosities; they forecast tokenized inference booming on platforms like DecentralizedInference.org. Traders can stake on verified slices, arbitraging compute yields as zkML demand surges 300% year-over-year in crypto AI sectors. DSperse’s modularity shines here, adapting to fast-changing model architectures without re-circuiting overhead.

Strategic alliances amplify this trajectory. The Cysic partnership deploys hybrid ZK hardware, fusing software slices with dedicated chips for edge inference in IoT swarms. Backed by $6.3 million from Delphi Ventures and others, Inference Labs eyes a Q3 2026 mainnet launch with SDKs for seamless Bittensor onboarding. Developers gain tools to define custom slices, from logit checks in classifiers to embedding validations in retrieval-augmented generation.

Inference Markets Transformed: Verifiable Compute at Scale

Zoom out to 2026: DSperse catapults decentralized zkML proving into prime time. Imagine DeFi protocols querying verified oracles for risk models, or NFT marketplaces authenticating generative-art provenance via slice proofs. As cost barriers evaporate, $10B+ in latent inference volume could be tokenized on-chain. Bittensor miners pivot to high-margin ZK tasks, boosting TAO emissions efficiency.

DSperse Applications in Decentralized Markets

- DeFi Agents with Verified Predictions: DSperse provides ZK-verified AI predictions for DeFi agents, enabling trustless trading decisions via targeted slice verification in zkML.
- Autonomous Systems Fault Tolerance: DSperse’s slice-based verification ensures fault-tolerant distributed AI inference, as shown in Inference Network’s scalable Proof of Inference protocol.
- Multimodal LLM Marketplaces: Supports provable vision and multimodal models on Bittensor Subnet 2, with over 160M ZK proofs on testnet for decentralized LLM verification.
- Tokenized Compute Trading: Partners with Cysic for decentralized ASIC compute, enabling tokenized trading of verified AI inference via DSperse framework.

Challenges persist, to be sure: slice composability demands rigorous aggregation protocols to thwart adversarial inputs. Yet Inference Labs’ open-source ethos since the DSperse merge has rallied 500+ GitHub contributors to tackle them. Notes on Semantic Scholar highlight flexible proof boundaries suited to diverse models, from vision transformers to diffusion pipelines.

Stakeholders from AI labs to crypto VCs are circling DSperse for its pragmatic punch. In a field littered with proof-of-concept zkML, this framework delivers production-grade targeted ZK verification for AI, slashing verification from days to minutes. As mainnet approaches, expect DSperse to redefine trust in distributed inference, fueling ecosystems where compute flows freely, verifiably, and profitably.